
Question: Time evolution of spin half probability and dispersion ([1] pr. 2.3)

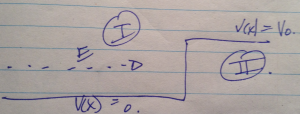

A spin \( 1/2 \) system, acted on by a magnetic field of strength \( B \) in the \( +z \) direction, is initially in the eigenstate of \( \BS \cdot \ncap \) with eigenvalue \( \Hbar/2 \), where \( \ncap = \sin \beta \xcap + \cos\beta \zcap \).

(a)

If \( S_x \) is measured at time \( t \), what is the probability of getting \( + \Hbar/2 \)?

(b)

Evaluate the dispersion in \( S_x \) as a function of \( t \), that is,

\begin{equation}\label{eqn:spinTimeEvolution:20}

\expectation{\lr{ S_x - \expectation{S_x}}^2}.

\end{equation}

(c)

Check your answers for \( \beta \rightarrow 0, \pi/2 \) to see if they make sense.

Answer

(a)

The spin operator in matrix form is

\begin{equation}\label{eqn:spinTimeEvolution:40}

\begin{aligned}

S \cdot \ncap

&=

\frac{\Hbar}{2} \lr{ \sigma_z \cos\beta + \sigma_x \sin\beta } \\

&=

\frac{\Hbar}{2} \lr{ \begin{bmatrix} 1 & 0 \\ 0 & -1 \\ \end{bmatrix} \cos\beta + \begin{bmatrix} 0 & 1 \\ 1 & 0 \\ \end{bmatrix} \sin\beta } \\

&=

\frac{\Hbar}{2}

\begin{bmatrix}

\cos\beta & \sin\beta \\

\sin\beta & -\cos\beta

\end{bmatrix}.

\end{aligned}

\end{equation}

The \( \ket{S \cdot \ncap ; + } \) eigenstate is found from

\begin{equation}\label{eqn:spinTimeEvolution:60}

\lr{ S \cdot \ncap - \Hbar/2}

\begin{bmatrix}

a \\

b

\end{bmatrix}

= 0,

\end{equation}

or

\begin{equation}\label{eqn:spinTimeEvolution:80}

\begin{aligned}

0

&=

\lr{ \cos\beta - 1 } a + \sin\beta b \\

&=

\lr{ -2 \sin^2(\beta/2) } a + 2 \sin(\beta/2) \cos(\beta/2) b \\

&=

\lr{ - \sin(\beta/2) } a + \cos(\beta/2) b,

\end{aligned}

\end{equation}

or

\begin{equation}\label{eqn:spinTimeEvolution:100}

\ket{ S \cdot \ncap ; + }

=

\begin{bmatrix}

\cos(\beta/2) \\

\sin(\beta/2) \\

\end{bmatrix}.

\end{equation}
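As a quick numerical sanity check of the eigenvector, here is a minimal NumPy sketch. It assumes units with \( \Hbar = 1 \) and an arbitrarily chosen test angle; the helper names `spin_n` and `plus_state` are hypothetical. It builds the matrix form of \( S \cdot \ncap \) from the Pauli matrices and confirms that \( (\cos(\beta/2), \sin(\beta/2)) \) is a normalized eigenvector with eigenvalue \( \Hbar/2 \):

```python
import numpy as np

hbar = 1.0  # assumption: natural units
sigma_x = np.array([[0, 1], [1, 0]], dtype=complex)
sigma_z = np.array([[1, 0], [0, -1]], dtype=complex)

def spin_n(beta):
    """Matrix of S.n for n = sin(beta) x + cos(beta) z."""
    return hbar / 2 * (np.cos(beta) * sigma_z + np.sin(beta) * sigma_x)

def plus_state(beta):
    """The +hbar/2 eigenvector (cos(beta/2), sin(beta/2)) derived above."""
    return np.array([np.cos(beta / 2), np.sin(beta / 2)], dtype=complex)

beta = 0.7  # arbitrary test angle
lhs = spin_n(beta) @ plus_state(beta)
rhs = hbar / 2 * plus_state(beta)
print(np.allclose(lhs, rhs))  # True
```

The check holds for any \( \beta \), since \( \cos\beta \cos(\beta/2) + \sin\beta \sin(\beta/2) = \cos(\beta/2) \) and \( \sin\beta \cos(\beta/2) - \cos\beta \sin(\beta/2) = \sin(\beta/2) \).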

Taking the spin magnetic moment to be \( e \BS/(m c) \) (as for an electron, with \( e < 0 \)), the Hamiltonian is

\begin{equation}\label{eqn:spinTimeEvolution:120}

H

= - \frac{e B}{m c} S_z

= - \frac{e B \Hbar}{2 m c} \sigma_z,

\end{equation}

so the time evolution operator is

\begin{equation}\label{eqn:spinTimeEvolution:140}

U

= e^{-i H t/\Hbar}

= e^{ \frac{i e B t }{2 m c} \sigma_z }.

\end{equation}

Let \( \omega = e B/(2 m c) \), so

\begin{equation}\label{eqn:spinTimeEvolution:160}

\begin{aligned}

U

&=

e^{i \sigma_z \omega t} \\

&=

\cos(\omega t) + i \sigma_z \sin(\omega t) \\

&=

\begin{bmatrix}

1 & 0 \\

0 & 1

\end{bmatrix}

\cos(\omega t)

+

i \begin{bmatrix} 1 & 0 \\ 0 & -1 \\ \end{bmatrix} \sin(\omega t) \\

&=

\begin{bmatrix}

e^{i \omega t} & 0 \\

0 & e^{-i \omega t}

\end{bmatrix}.

\end{aligned}

\end{equation}
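The closed form for \( U \) can be cross-checked without the \( \cos + i \sigma_z \sin \) identity by exponentiating \( i \sigma_z \omega t \) through an eigendecomposition, using \( e^{i A} = V e^{i \Lambda} V^\dagger \) for Hermitian \( A = V \Lambda V^\dagger \). This is a sketch only; the values of \( \omega \) and \( t \) are arbitrary test choices and `expi_hermitian` is a hypothetical helper:

```python
import numpy as np

sigma_z = np.array([[1, 0], [0, -1]], dtype=complex)

def expi_hermitian(a):
    """exp(i a) for a Hermitian matrix a, via a = V diag(lam) V^dagger."""
    lam, v = np.linalg.eigh(a)
    return v @ np.diag(np.exp(1j * lam)) @ v.conj().T

def u_closed_form(omega, t):
    """diag(e^{i omega t}, e^{-i omega t}), the result derived above."""
    return np.diag([np.exp(1j * omega * t), np.exp(-1j * omega * t)])

omega, t = 1.3, 0.45  # arbitrary test values
u_numeric = expi_hermitian(sigma_z * omega * t)
print(np.allclose(u_numeric, u_closed_form(omega, t)))  # True
```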

The time evolution of the initial state is

\begin{equation}\label{eqn:spinTimeEvolution:180}

\begin{aligned}

\ket{S \cdot \ncap ; + }(t)

&=

U \ket{S \cdot \ncap ; + }(0) \\

&=

\begin{bmatrix}

e^{i \omega t} & 0 \\

0 & e^{-i \omega t}

\end{bmatrix}

\begin{bmatrix}

\cos(\beta/2) \\

\sin(\beta/2) \\

\end{bmatrix} \\

&=

\begin{bmatrix}

\cos(\beta/2) e^{i \omega t} \\

\sin(\beta/2) e^{-i \omega t} \\

\end{bmatrix}.

\end{aligned}

\end{equation}
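An independent check of this evolved state is to integrate the Schrödinger equation \( i \Hbar \, d\ket{\psi}/dt = H \ket{\psi} \) numerically. With \( \omega = e B/(2 m c) \) the Hamiltonian above is \( H = -\Hbar \omega \sigma_z \), so \( d\ket{\psi}/dt = i \omega \sigma_z \ket{\psi} \). The sketch below uses a fixed-step classical Runge-Kutta (RK4) integrator; the step count and test values are arbitrary assumptions, not anything from the original derivation:

```python
import numpy as np

sigma_z = np.array([[1, 0], [0, -1]], dtype=complex)

def rhs(psi, omega):
    """d psi/dt = -i H psi / hbar with H = -hbar omega sigma_z."""
    return 1j * omega * (sigma_z @ psi)

def evolve_rk4(psi0, omega, t, steps=2000):
    """Integrate the Schrodinger equation with fixed-step classical RK4."""
    psi = psi0.astype(complex)
    dt = t / steps
    for _ in range(steps):
        k1 = rhs(psi, omega)
        k2 = rhs(psi + dt / 2 * k1, omega)
        k3 = rhs(psi + dt / 2 * k2, omega)
        k4 = rhs(psi + dt * k3, omega)
        psi = psi + dt / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
    return psi

def evolved_closed_form(beta, omega, t):
    """(cos(beta/2) e^{i omega t}, sin(beta/2) e^{-i omega t})."""
    return np.array([np.cos(beta / 2) * np.exp(1j * omega * t),
                     np.sin(beta / 2) * np.exp(-1j * omega * t)])

beta, omega, t = 0.7, 1.3, 2.0  # arbitrary test values
psi0 = np.array([np.cos(beta / 2), np.sin(beta / 2)])
print(np.allclose(evolve_rk4(psi0, omega, t), evolved_closed_form(beta, omega, t)))  # True
```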

The probability of finding the system in the state \( \ket{S \cdot \xcap ; + } \) at time \( t \) (i.e., measuring \( S_x \) and obtaining \( +\Hbar/2 \)) is

\begin{equation}\label{eqn:spinTimeEvolution:200}

\begin{aligned}

\Abs{\braket{S \cdot \xcap ; + }{S \cdot \ncap ; + }}^2

&=

\Abs{\inv{\sqrt{2}}

\begin{bmatrix}

1 & 1 \\

\end{bmatrix}

\begin{bmatrix}

\cos(\beta/2) e^{i \omega t} \\

\sin(\beta/2) e^{-i \omega t} \\

\end{bmatrix}

}^2 \\

&=

\inv{2}

\Abs{

\cos(\beta/2) e^{i \omega t} +

\sin(\beta/2) e^{-i \omega t} }^2 \\

&=

\inv{2} \lr{ 1 + 2 \cos(\beta/2) \sin(\beta/2) \cos(2 \omega t) } \\

&=

\inv{2} \lr{ 1 + \sin(\beta) \cos( 2 \omega t) }.

\end{aligned}

\end{equation}
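The same probability can be computed numerically by projecting the evolved state onto \( \ket{S \cdot \xcap ; + } = (1, 1)/\sqrt{2} \). In this sketch (arbitrary test values for \( \beta \) and \( \omega \); hypothetical helper names), `np.vdot` conjugates its first argument, so it forms the bra-ket directly:

```python
import numpy as np

def evolved_state(beta, omega, t):
    """|S.n;+>(t) = (cos(beta/2) e^{i omega t}, sin(beta/2) e^{-i omega t})."""
    return np.array([np.cos(beta / 2) * np.exp(1j * omega * t),
                     np.sin(beta / 2) * np.exp(-1j * omega * t)])

x_plus = np.array([1, 1], dtype=complex) / np.sqrt(2)  # |S.x;+>

def prob_x_plus(beta, omega, t):
    """|<S.x;+|S.n;+>(t)|^2 by direct projection."""
    return abs(np.vdot(x_plus, evolved_state(beta, omega, t))) ** 2

def prob_closed_form(beta, omega, t):
    """(1 + sin(beta) cos(2 omega t)) / 2."""
    return 0.5 * (1 + np.sin(beta) * np.cos(2 * omega * t))

beta, omega = 0.7, 1.3  # arbitrary test values
ts = np.linspace(0, 5, 11)
print(np.allclose([prob_x_plus(beta, omega, t) for t in ts],
                  prob_closed_form(beta, omega, ts)))  # True
```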

(b)

To calculate the dispersion first note that

\begin{equation}\label{eqn:spinTimeEvolution:300}

S_x^2

= \lr{ \frac{\Hbar}{2} }^2 \begin{bmatrix} 0 & 1 \\ 1 & 0 \\ \end{bmatrix}^2

= \lr{ \frac{\Hbar}{2} }^2,

\end{equation}

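so \( \expectation{S_x^2} = (\Hbar/2)^2 \) in any state. Since the dispersion is \( \expectation{S_x^2} - \expectation{S_x}^2 \), only the first order expectation \( \expectation{S_x} \) requires any real calculation. That is,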

\begin{equation}\label{eqn:spinTimeEvolution:320}

\begin{aligned}

\expectation{S_x}

&=

\frac{\Hbar}{2}

\begin{bmatrix}

\cos(\beta/2) e^{-i \omega t} &

\sin(\beta/2) e^{i \omega t}

\end{bmatrix}

\begin{bmatrix} 0 & 1 \\ 1 & 0 \\ \end{bmatrix}

\begin{bmatrix}

\cos(\beta/2) e^{i \omega t} \\

\sin(\beta/2) e^{-i \omega t} \\

\end{bmatrix} \\

&=

\frac{\Hbar}{2}

\begin{bmatrix}

\cos(\beta/2) e^{-i \omega t} &

\sin(\beta/2) e^{i \omega t}

\end{bmatrix}

\begin{bmatrix}

\sin(\beta/2) e^{-i \omega t} \\

\cos(\beta/2) e^{i \omega t} \\

\end{bmatrix} \\

&=

\frac{\Hbar}{2}

\sin(\beta/2) \cos(\beta/2) \lr{ e^{-2 i \omega t} + e^{ 2 i \omega t} } \\

&=

\frac{\Hbar}{2} \sin\beta \cos( 2 \omega t ).

\end{aligned}

\end{equation}
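A numerical version of this sandwich product is a one-liner once the evolved state is available. The sketch assumes \( \Hbar = 1 \) and arbitrary test values; `expectation` is a hypothetical helper that takes the real part, which is all that survives for a Hermitian operator:

```python
import numpy as np

hbar = 1.0  # assumption: natural units
S_x = hbar / 2 * np.array([[0, 1], [1, 0]], dtype=complex)

def evolved_state(beta, omega, t):
    """|S.n;+>(t) from the time evolution above."""
    return np.array([np.cos(beta / 2) * np.exp(1j * omega * t),
                     np.sin(beta / 2) * np.exp(-1j * omega * t)])

def expectation(op, psi):
    """<psi|op|psi>; np.vdot conjugates psi, and the result is real for Hermitian op."""
    return np.vdot(psi, op @ psi).real

beta, omega = 0.7, 1.3  # arbitrary test values
ts = np.linspace(0, 5, 11)
numeric = [expectation(S_x, evolved_state(beta, omega, t)) for t in ts]
closed = hbar / 2 * np.sin(beta) * np.cos(2 * omega * ts)
print(np.allclose(numeric, closed))  # True
```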

This gives

\begin{equation}\label{eqn:spinTimeEvolution:340}

\boxed{

\expectation{(\Delta S_x)^2}

=

\lr{ \frac{\Hbar}{2} }^2 \lr{ 1 - \sin^2\beta \cos^2( 2 \omega t ) }.

}

\end{equation}
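Because \( S_x \) has only the two outcomes \( \pm \Hbar/2 \), the dispersion can also be expressed through the part (a) probability \( P_+ \): \( \expectation{S_x} = (\Hbar/2)(2 P_+ - 1) \), so \( \expectation{(\Delta S_x)^2} = \Hbar^2 P_+ (1 - P_+) \). The sketch below (units \( \Hbar = 1 \), arbitrary test values, hypothetical helper names) checks that this route and the direct operator computation both reproduce the boxed result:

```python
import numpy as np

hbar = 1.0  # assumption: natural units
S_x = hbar / 2 * np.array([[0, 1], [1, 0]], dtype=complex)

def evolved_state(beta, omega, t):
    return np.array([np.cos(beta / 2) * np.exp(1j * omega * t),
                     np.sin(beta / 2) * np.exp(-1j * omega * t)])

def dispersion_operator(beta, omega, t):
    """<S_x^2> - <S_x>^2 computed directly from the evolved state."""
    psi = evolved_state(beta, omega, t)
    mean = np.vdot(psi, S_x @ psi).real
    mean_sq = np.vdot(psi, S_x @ S_x @ psi).real
    return mean_sq - mean ** 2

def dispersion_from_probability(beta, omega, t):
    """hbar^2 P (1 - P), with P the part (a) probability of +hbar/2."""
    p = 0.5 * (1 + np.sin(beta) * np.cos(2 * omega * t))
    return hbar ** 2 * p * (1 - p)

def dispersion_boxed(beta, omega, t):
    return (hbar / 2) ** 2 * (1 - np.sin(beta) ** 2 * np.cos(2 * omega * t) ** 2)

beta, omega = 0.7, 1.3  # arbitrary test values
ts = np.linspace(0, 5, 11)
a = [dispersion_operator(beta, omega, t) for t in ts]
b = dispersion_from_probability(beta, omega, ts)
c = dispersion_boxed(beta, omega, ts)
print(np.allclose(a, c), np.allclose(b, c))  # True True
```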

(c)

For \( \beta = 0 \), \( \ncap = \zcap \), and for \( \beta = \pi/2 \), \( \ncap = \xcap \). In the first case, the initial state is an eigenstate of \( S_z \), and hence of \( H \), so it only acquires a phase:

\begin{equation}\label{eqn:spinTimeEvolution:220}

\ket{S \cdot \ncap ; + }(t) = \ket{S \cdot \ncap ; + }(0) e^{i \omega t}.

\end{equation}

The probability of finding it in the state \( \ket{S \cdot \xcap ; + } \) is therefore

\begin{equation}\label{eqn:spinTimeEvolution:240}

\begin{aligned}

\Abs{

\inv{\sqrt{2}}

\begin{bmatrix}

1 & 1

\end{bmatrix}

\begin{bmatrix}

e^{i \omega t} \\

0

\end{bmatrix}

}^2

&=

\inv{2} \Abs{ e^{i\omega t} }^2 \\

&=

\inv{2} \\

&=

\inv{2} \lr{ 1 + \sin(0) \cos(2 \omega t) }.

\end{aligned}

\end{equation}

This matches \ref{eqn:spinTimeEvolution:200} at \( \beta = 0 \), as expected.

For \( \beta = \pi/2 \) we have

\begin{equation}\label{eqn:spinTimeEvolution:260}

\begin{aligned}

\ket{S \cdot \xcap ; + }(t)

&=

\inv{\sqrt{2}}

\begin{bmatrix}

e^{i \omega t} & 0 \\

0 & e^{-i \omega t}

\end{bmatrix}

\begin{bmatrix}

1 \\

1

\end{bmatrix} \\

&=

\inv{\sqrt{2}}

\begin{bmatrix}

e^{i \omega t} \\

e^{-i \omega t}

\end{bmatrix}.

\end{aligned}

\end{equation}

The probability of measuring \( S_x = +\Hbar/2 \) at time \( t \) is

\begin{equation}\label{eqn:spinTimeEvolution:280}

\begin{aligned}

\Abs{

\inv{2}

\begin{bmatrix}

1 & 1

\end{bmatrix}

\begin{bmatrix}

e^{i \omega t} \\

e^{-i \omega t}

\end{bmatrix}

}^2

&=

\inv{4} \Abs{ e^{i \omega t} + e^{-i \omega t} }^2 \\

&=

\cos^2(\omega t) \\

&=

\inv{2}\lr{ 1 + \sin(\pi/2) \cos( 2 \omega t )}.

\end{aligned}

\end{equation}

Again, this matches \ref{eqn:spinTimeEvolution:200}, now evaluated at \( \beta = \pi/2 \).
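Both limiting cases can be checked numerically by projecting the limiting states directly onto \( \ket{S \cdot \xcap ; + } \) and comparing with the general formula. This is a sketch with an arbitrary test value of \( \omega \); `prob_general` is a hypothetical name for the part (a) result:

```python
import numpy as np

x_plus = np.array([1, 1], dtype=complex) / np.sqrt(2)  # |S.x;+>

def prob_general(beta, omega, t):
    """Part (a) result: (1 + sin(beta) cos(2 omega t)) / 2."""
    return 0.5 * (1 + np.sin(beta) * np.cos(2 * omega * t))

omega = 1.3  # arbitrary test value
for t in np.linspace(0, 5, 11):
    # beta = 0: the S_z eigenstate only picks up the phase e^{i omega t}
    psi_z = np.array([np.exp(1j * omega * t), 0])
    # beta = pi/2: the evolved S_x eigenstate
    psi_x = np.array([np.exp(1j * omega * t), np.exp(-1j * omega * t)]) / np.sqrt(2)
    assert np.isclose(abs(np.vdot(x_plus, psi_z)) ** 2, prob_general(0.0, omega, t))
    assert np.isclose(abs(np.vdot(x_plus, psi_x)) ** 2, prob_general(np.pi / 2, omega, t))
print("both limits agree")
```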

For the dispersions, at \( \beta = 0 \), the dispersion is

\begin{equation}\label{eqn:spinTimeEvolution:360}

\lr{\frac{\Hbar}{2}}^2

\end{equation}

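This is the maximum possible dispersion, since it equals \( \expectation{S_x^2} \). That makes sense: the initial state \( \ket{S \cdot \zcap ; + } \) is stationary, so \( \expectation{S_x} = 0 \) at all times and the outcomes \( \pm \Hbar/2 \) are equally likely. For \( \beta = \pi/2 \) the dispersion is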

\begin{equation}\label{eqn:spinTimeEvolution:380}

\lr{\frac{\Hbar}{2}}^2 \sin^2 ( 2 \omega t ).

\end{equation}

This starts off at zero (because the initial state is \( \ket{ S \cdot \xcap ; + } \)), but then oscillates, returning to zero whenever \( 2 \omega t \) is an integer multiple of \( \pi \).
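The dispersion limits can be confirmed directly from the boxed formula. This sketch assumes \( \Hbar = 1 \) and an arbitrary \( \omega \); it checks the constant maximum at \( \beta = 0 \), the \( \sin^2(2 \omega t) \) form at \( \beta = \pi/2 \), and the zeros at \( 2 \omega t = n \pi \):

```python
import numpy as np

hbar = 1.0  # assumption: natural units

def dispersion(beta, omega, t):
    """Boxed result: (hbar/2)^2 (1 - sin^2(beta) cos^2(2 omega t))."""
    return (hbar / 2) ** 2 * (1 - np.sin(beta) ** 2 * np.cos(2 * omega * t) ** 2)

omega = 1.3  # arbitrary test value
ts = np.linspace(0, 5, 11)
# beta = 0: constant at the maximum value (hbar/2)^2
print(np.allclose(dispersion(0.0, omega, ts), (hbar / 2) ** 2))  # True
# beta = pi/2: (hbar/2)^2 sin^2(2 omega t)
print(np.allclose(dispersion(np.pi / 2, omega, ts),
                  (hbar / 2) ** 2 * np.sin(2 * omega * ts) ** 2))  # True
# ... which vanishes whenever 2 omega t is a multiple of pi
zero_times = np.arange(4) * np.pi / (2 * omega)
print(np.allclose(dispersion(np.pi / 2, omega, zero_times), 0.0))  # True
```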

References

[1] Jun John Sakurai and Jim J Napolitano. Modern Quantum Mechanics. Pearson Higher Ed, 2014.