In a recent Brian Keating podcast, he asked people to comment if they had their own theory of physics.

I’ve done a lot of exploration of conventional physics, both on my own, and with some in-class studies (non-degree undergrad physics courses at UofT, plus grad QM and QFTI), but I don’t have my own personal theories of physics. It’s enough of a challenge to figure out the existing theories without making up your own\({}^{1}\).

However, I have had one close encounter with an alternate physics theory, as I had a classmate in undergrad QMI (phy356) who had a personal “Aether” theory of relativity. He shared that theory with me, but it came in the form of about 50 pages of dense text without equations. For all intents and purposes, this was a theory that was written in an entirely different language than the rest of physics. To him, it was all self-evident, and he got upset with the suggestion of trying to mathematize it. A lot of work and thought went into that theory, but it is work that has very little chance of paying off, since it was packaged in a form that made it unpalatable to anybody who is studying conventional physics. There is also a great deal of work that would be required to “complete” the theory (presuming that could be done), since he would have to show that his theory is not inconsistent with many measurements and experiments, and would not predict nonphysical phenomena. That was really an impossible task, which he would have found had he attempted to do so. However, instead of attempting to do that work, he seemed to think that the onus should fall on others to do so. He had done the work to write what he believed to be a self-consistent logical theory that was self-evidently true, and shouldn’t have to do anything more.

It is difficult to fully comprehend how he would have arrived at such certainty about his Aether theory, when he did not have the mathematical sophistication to handle the physics found in the theories that he believed his should supplant. However, in his defence, there are some parts of what I imagine were part of his thought process that I can sympathize with. The amount of knowledge required to understand the functioning of even a simple digital watch (not to mention the cell “phone” computers that we now all carry) is absolutely phenomenal. We are surrounded by so much sophisticated technology that the mechanisms behind it all are practically unknowable. Much of the world around us is effectively magic to most people, even those with technical sophistication. Should there be some sort of catastrophe that wipes out civilization, requiring us to relearn or redevelop everything from first principles, nobody really has the breadth required to reconstruct the world around us. It is rather humbling to ponder that.

One way of coping with the fact that it is effectively impossible to know how everything works is to not believe in any consensus theories, period. I think that is the origin of the recent popularization of flat earth models. I think this was a factor in my classmate’s theory, as he also went on to believe that quantum mechanics was false (or also believed that when I knew him, but never stated it to me.) People understand that it is impossible to know everything required to build their own satellites, imaging systems, rockets, et al. (i.e. sophisticated methods of disproving the flat earth theory) and decide to disbelieve everything that they cannot personally prove. That’s an interesting defence mechanism, but takes things to a rather extreme conclusion.

I have a lot of sympathy for those that do not believe in consensus theories. Without such disbelief I would not have my current understanding of the world. It happens that the prevailing consensus theory that I knew growing up was that of Scientology. Among the many ideas that one finds in Scientology is a statement that relativity is wrong\({}^2\). It has been too many years for me to accurately state the reasons that Hubbard stated that relativity was incorrect, but I do seem to recall that one of the arguments had to do with the speed of light being non-constant when bent by a prism\({}^3\). I carried some Scientology-derived skepticism of relativity into the undergrad “relativistic electrodynamics”\({}^4\) course that I took back around 2010, but had I not been willing to disregard the consensus beliefs that I had been exposed to up to that point in time, I would not have learned anything from that class. Throwing away beliefs so that you can form your own conclusions is the key to being able to learn and grow.

I would admit to still carrying around baggage from my early indoctrination, despite not having practised Scientology for 25+ years. This baggage spans multiple domains. One example is that I am not subscribed to the religious belief that government and police are my friends. It is hard to see your father, whom you love and respect, persecuted, and not come away with disrespect for the persecuting institutions. I now have a rough idea of what Dad did, back in the Scientology Guardian’s Office, that triggered the ire of the Ontario crown attorneys\({}^5\). However, that history definitely colored my views and current attitudes. In particular, I recognize that history as a key factor that pushed me so strongly in a libertarian direction. The libertarian characterization of government as an entity that infringes on personal property and rights seems very reasonable, and aligns perfectly with my experience\({}^6\).

A second example of indoctrination-based disbelief that I recognize that I carry with me is not subscribing to the current popular cosmological models of physics. The big bang, and the belief that we know to picosecond granularity how the universe was at its beginning, seems to me very religious. That belief requires too much extrapolation, and it does not seem any more convincing to me than the Scientology cosmology. The Scientology cosmology is somewhat ambiguous, and contains both a multiverse story and a steady state but finite model. In the steady state aspect of that cosmology, the universe that we inhabit is dated with an age of 76 trillion years, but I don’t recall any sort of origin story for the beginning portion of that 76 trillion. Given the little bits of things that we can actually measure and observe, I have no inclination to sign up for the big bang testament any more than any other mythical origin story. Thankfully, I can study almost anything that has practical application in physics or engineering and no amount of belief or disdain in the big bang or other less popular “physics” cosmologies makes any difference. All of these, whether they be the big bang, cyclic theories, multiverses (of the quantum, thetan-created\({}^7\), or inflationary varieties), or even the old Scientology 76-trillion-year-old cosmology of my youth, cannot be measured, proven or disproved. Just about any cosmology has no impact on anything real.

This throw-it-all-out point of view of cosmology is probably too cynical and harsh a treatment of the subject. It is certainly not the point of view that most practising physicists would take, but it is eminently practical. There’s too much that is unknowable, so why waste time on the least knowable aspects of the unknowable when there are so many concrete things that we can learn?

Footnotes:

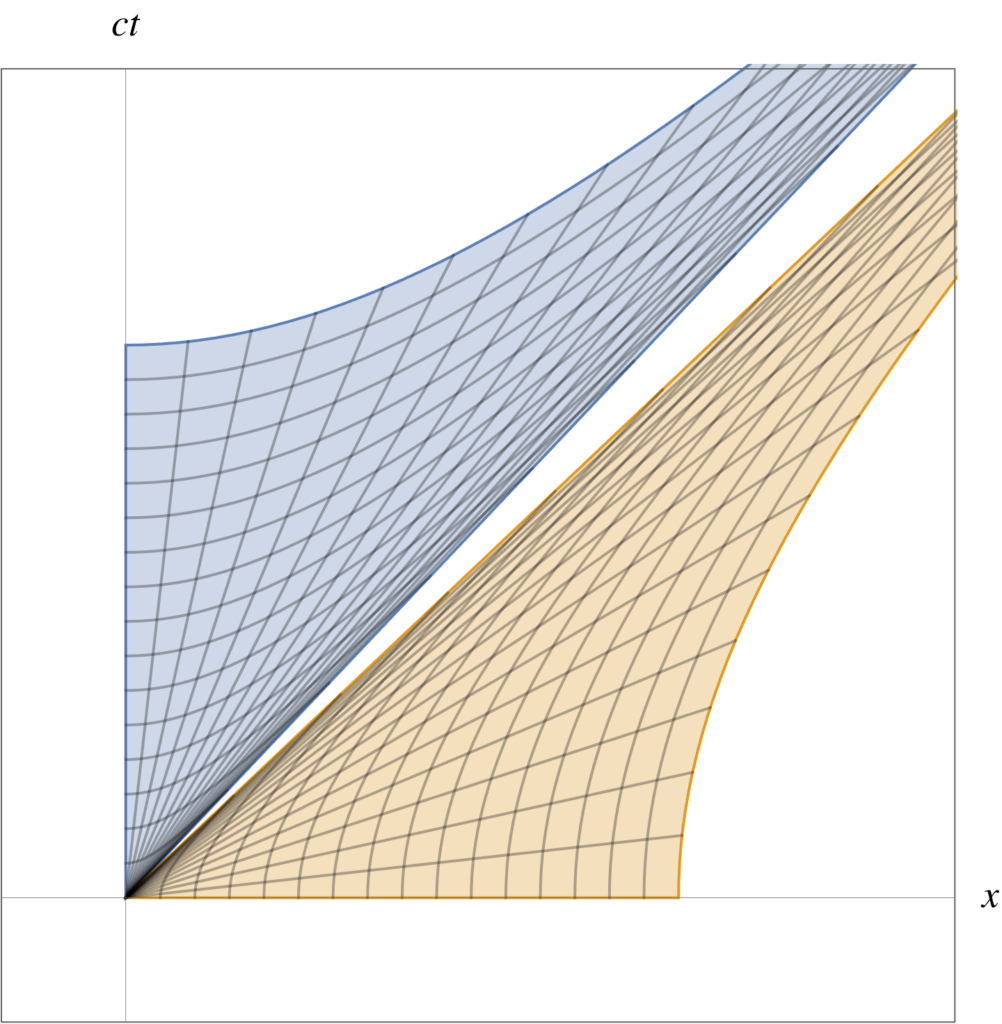

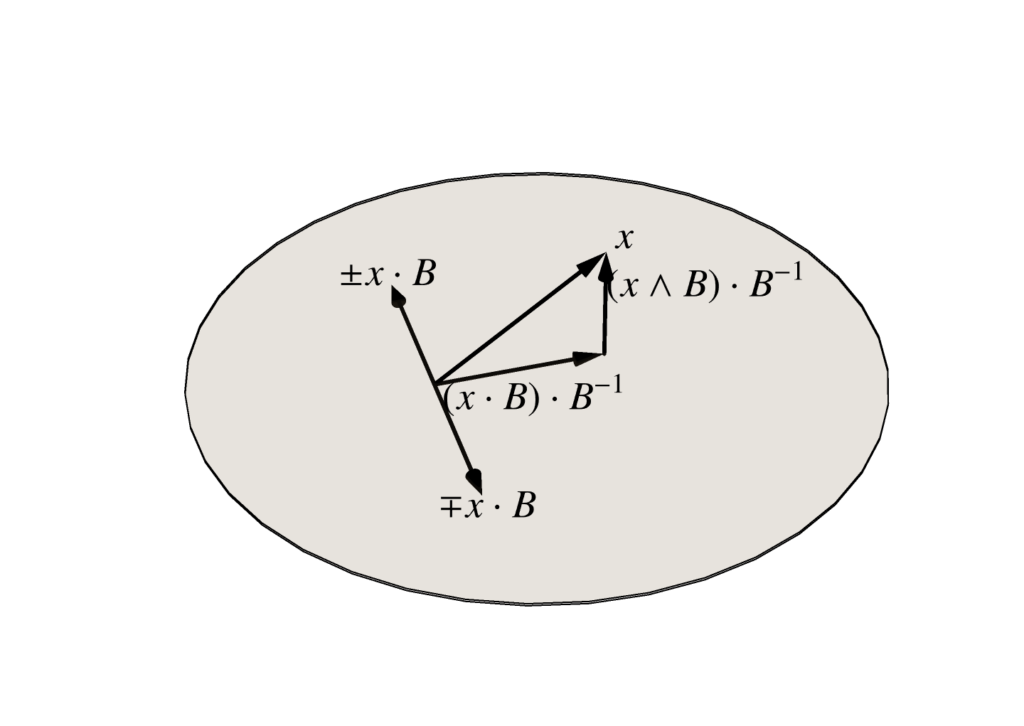

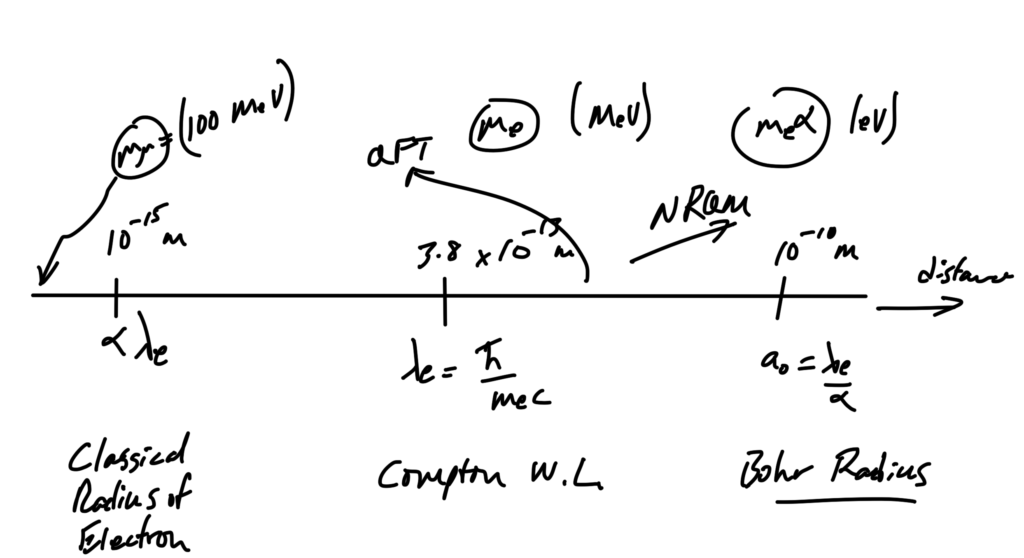

[1] The closest that I have come to my own theory of physics is somewhat zealous advocacy for the use of real Clifford algebras in engineering and physics (a.k.a. geometric algebra). However, that is not a new theory. It is just a highly effective way to compactly represent many of the entities that we encounter in more clumsy forms in conventional physics.

[2] Hubbard’s sci-fi writing shows that he had knowledge of special relativistic time-dilation, and length-contraction effects. I seem to recall that Lorentz transformations were mentioned in passing (on either the Student hat course, or in the “PDC” lectures). I don’t believe that Hubbard had the mathematical sophistication to describe a Lorentz transformation in a quantitative sense.

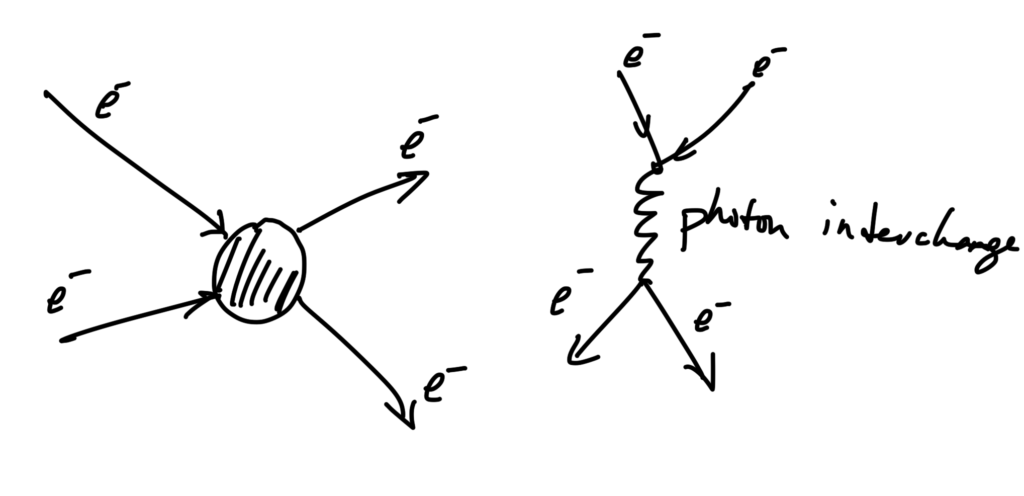

[3] The traversal of light through matter is a complex affair, considerably different from light in vacuum, where the relativistic constancy applies. It would be interesting to have a quantitative understanding of the chances of a photon getting through a piece of glass without interacting (absorption and subsequent time-delayed spontaneous re-emission of new photons when the lattice atoms drop back into low energy states.) There are probably also interactions of photons with the phonons of the lattice itself, and I don’t know how those would be quantified. However, in short, I bet there is a large chance that most of the light that exits a transparent piece of matter is not the same light that went in, as it is going to come out as photons with different momentum, polarization, frequency, and so forth. If we measure characteristics of a pulse of light going into and back out of matter, it’s probably somewhat akin to measuring the characteristics of a clementine orange that is thrown at a piece of heavy chicken wire at fastball speeds. You’ll get some orange peel, seeds, pulp and other constituent components out the other side of the mesh, but shouldn’t really consider the inputs and the outputs to be equivalent entities.

[4] Relativistic electrodynamics was an extremely redundant course title, but was used to distinguish the class from the 3rd year applied electrodynamics course that had none of the tensor, relativistic, Lagrangian, or four-vector baggage.

[5] Some information about that court case is publicly available, but it would be interesting to see whether I could use the Canadian or Ontario equivalent to the US freedom of information laws to request records from the Ontario crown and the RCMP about the specifics of Dad’s case. Dad has passed, and was never terribly approachable about the subject when I could have asked him personally. I did get his spin on the events as well as the media spin, and suspect that the truth is somewhere in between.

[6] This last year will probably push many people towards libertarianism (at least the subset of people that are not happy to be conforming sheep, or are too scared not to conform.) We’ve had countless examples of watching evil bastards in government positions of power impose dictatorial and oppressive covid lockdowns on the poorest and most unfortunate people that they supposedly represent. Instead, we see the corruption at full scale, with the little guys clobbered, and this covid lockdown scheme essentially being a way to efficiently channel money into the pockets of the rich. The little guys lose their savings, livelihoods, and get their businesses shut down by fat corrupt bastards that believe they have the authority to decide whether or not you as an individual are essential. The fat bastards that have the stamp of government authority do not believe that you should have the right to make up your own mind about what levels of risk are acceptable to you or your family.

[7] In Scientology, a sufficiently capable individual is considered capable of creating their own universes, independent of the 76-trillion-year-old universe that we currently inhabit. Thetan is the label for the non-corporeal form of that individual (i.e. what would be called the spirit or the soul in other religions.)