[Click here for a PDF of this post with nicer formatting]

Disclaimer

Peeter’s lecture notes from class. These may be incoherent and rough.

Backward Euler method

Discretized time dependent partial differential equations were seen to have the form

\begin{equation}\label{eqn:multiphysicsL14:20}

G \Bx(t) + C \dot{\Bx}(t) = B \Bu(t),

\end{equation}

where \( G, C, B \) are matrices, and \( \Bu(t) \) is a vector of sources.

The backward Euler method augments \ref{eqn:multiphysicsL14:20} with an initial condition. For a one dimensional system such an initial condition could a zero time specification

\begin{equation}\label{eqn:multiphysicsL14:40}

G x(t) + C \dot{x}(t) = B u(t),

\end{equation}

\begin{equation}\label{eqn:multiphysicsL14:60}

x(0) = x_0

\end{equation}

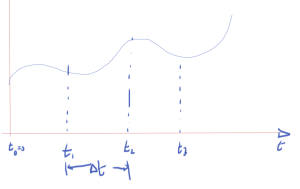

Discretizing time as in fig. 1.

The discrete derivative, using a backward difference, is

\begin{equation}\label{eqn:multiphysicsL14:80}

\dot{x}(t = t_n) \approx \frac{ x_n – x_{n-1} }{\Delta t}

\end{equation}

Evaluating \ref{eqn:multiphysicsL14:40} at \( t = t_n \) is

\begin{equation}\label{eqn:multiphysicsL14:100}

G x_n + C \dot{x}(t = t_n) = B u(t_n),

\end{equation}

or approximately

\begin{equation}\label{eqn:multiphysicsL14:120}

G x_n + C \frac{x_n – x_{n-1}}{\Delta t} = B u(t_n).

\end{equation}

Rearranging

\begin{equation}\label{eqn:multiphysicsL14:140}

\lr{ G + \frac{C}{\Delta t} } x_n = \frac{C}{\Delta t} x_{n-1}

+

B u(t_n).

\end{equation}

Assuming that matrices \( G, C \) are constant, and \( \Delta t \) is fixed, a matrix inversion can be avoided, and a single LU decomposition can be used. For \( N \) sampling points (not counting \( t_0 = 0 \)), \( N \) sets of backward and forward substitutions will be required to compute \( x_1 \) from \( x_0 \), and so forth.

Backwards Euler is an implicit method.

Recall that the forward Euler method gave

\begin{equation}\label{eqn:multiphysicsL14:160}

x_{n+1} =

x_n \lr{ I – C^{-1} \Delta t G }

+ C^{-1} \Delta t B u(t_n)

\end{equation}

This required

- \( C \) must be invertible.

- \( C \) must be cheap to invert, perhaps \( C = I \), so that

\begin{equation}\label{eqn:multiphysicsL14:180}

x_{n+1} =

\lr{ I – \Delta t G } x_n

+ \Delta t B u(t_n)

\end{equation} - This is an explicit method

- This can be cheap but unstable.

Trapezoidal rule (TR)

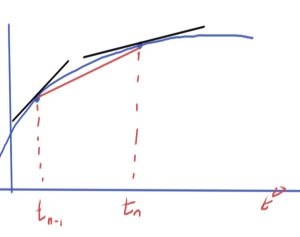

The derivative can be approximated using an average of the pair of derivatives as illustrated in fig. 2.

\begin{equation}\label{eqn:multiphysicsL14:200}

\frac{x_n – x_{n-1}}{\Delta t} \approx \frac{

\dot{x}(t_{n-1})

+

\dot{x}(t_{n})

}

{2}.

\end{equation}

Application to \ref{eqn:multiphysicsL14:40} for \( t_{n-1}, t_n \) respectively gives

\begin{equation}\label{eqn:multiphysicsL14:220}

\begin{aligned}

G x_{n-1} + C \dot{x}(t_{n-1}) &= B u(t_{n-1}) \\

G x_{n} + C \dot{x}(t_{n}) &= B u(t_{n}) \\

\end{aligned}

\end{equation}

Averaging these

\begin{equation}\label{eqn:multiphysicsL14:240}

G \frac{ x_{n-1} + x_n }{2} + C

\frac{

\dot{x}(t_{n-1})

+\dot{x}(t_{n})

}{2}

= B

\frac{u(t_{n-1})

+

u(t_{n}) }{2},

\end{equation}

and inserting the trapezoidal approximation

\begin{equation}\label{eqn:multiphysicsL14:280}

G \frac{ x_{n-1} + x_n }{2}

+

C

\frac{

x_{n} –

x_{n-1}

}{\Delta t}

= B

\frac{u(t_{n-1})

+

u(t_{n}) }{2},

\end{equation}

and a final rearrangement yields

\begin{equation}\label{eqn:multiphysicsL14:260}

\boxed{

\lr{ G + \frac{2}{\Delta t} C } x_n

=

–

\lr{ G – \frac{2}{\Delta t} C } x_{n_1}

+ B

\lr{u(t_{n-1})

+

u(t_{n}) }.

}

\end{equation}

This is

- also an implicit method.

- requires LU of \( G – 2 C /\Delta t \).

- more accurate than BE, for the same computational cost.

In all of these methods, accumulation of error is something to be very careful of, and in some cases such error accumulation can even be exponential.

This is effectively a way to introduce central differences. On the slides this is seen to be more effective at avoiding either artificial damping and error accumulation that can be seen in backwards and forwards Euler method respectively.