
Motivation.

I was asked about geometric algebra equivalents for a couple identities found in [1], one for line integrals

\begin{equation}\label{eqn:more_feynmans_trick:20}

\ddt{} \int_{C(t)} \Bf \cdot d\Bx =

\int_{C(t)} \lr{

\PD{t}{\Bf} + \spacegrad \lr{ \Bv \cdot \Bf } - \Bv \cross \lr{ \spacegrad \cross \Bf }

}

\cdot d\Bx,

\end{equation}

and one for area integrals

\begin{equation}\label{eqn:more_feynmans_trick:40}

\ddt{} \int_{S(t)} \Bf \cdot d\BA =

\int_{S(t)} \lr{

\PD{t}{\Bf} + \Bv \lr{ \spacegrad \cdot \Bf } - \spacegrad \cross \lr{ \Bv \cross \Bf }

}

\cdot d\BA.

\end{equation}

Both of these look questionable at first glance, because neither has a boundary term. However, they can be transformed with Stokes’ theorem to

\begin{equation}\label{eqn:more_feynmans_trick:60}

\ddt{} \int_{C(t)} \Bf \cdot d\Bx

=

\int_{C(t)} \lr{

\PD{t}{\Bf} - \Bv \cross \lr{ \spacegrad \cross \Bf }

}

\cdot d\Bx

+

\evalbar{\Bv \cdot \Bf }{\Delta C},

\end{equation}

and

\begin{equation}\label{eqn:more_feynmans_trick:80}

\ddt{} \int_{S(t)} \Bf \cdot d\BA =

\int_{S(t)} \lr{

\PD{t}{\Bf} + \Bv \lr{ \spacegrad \cdot \Bf }

}

\cdot d\BA

-

\oint_{\partial S(t)} \lr{ \Bv \cross \Bf } \cdot d\Bx.

\end{equation}

The area integral derivative is now seen to be a variation of one of the special cases of the Leibniz integral rule; see, for example, [2]. The author admits that the line integral relationship is not well used, and it doesn’t show up on the Wikipedia page.

My end goal will be to evaluate the derivative of a general multivector line integral

\begin{equation}\label{eqn:more_feynmans_trick:100}

\ddt{} \int_{C(t)} F d\Bx G,

\end{equation}

and area integral

\begin{equation}\label{eqn:more_feynmans_trick:120}

\ddt{} \int_{S(t)} F d^2\Bx G.

\end{equation}

We’ve derived that line integral result in a different fashion previously, but it’s interesting to see a different approach. Perhaps this approach will lend itself nicely to non-scalar integrands?

Prerequisites.

Definition 1.1: Convective derivative.

The convective derivative of \( \phi(t, \Bx(t)) \) is defined as

\begin{equation*}

\frac{D \phi}{D t} = \lim_{\Delta t \rightarrow 0} \frac{ \phi(t + \Delta t, \Bx + \Delta t \Bv) - \phi(t, \Bx)}{\Delta t},

\end{equation*}

where \( \Bv = d\Bx/dt \).

Theorem 1.1: Convective derivative.

The convective derivative operator may be written

\begin{equation*}

\frac{D}{D t} = \PD{t}{} + \Bv \cdot \spacegrad.

\end{equation*}

Start proof:

With \( h = \Delta t \), let’s write

\begin{equation}\label{eqn:more_feynmans_trick:140}

\begin{aligned}

v_0 &= 1 \\

u_0 &= t + v_0 h \\

u_k &= x_k + v_k h, k \in [1,3] \\

\end{aligned}

\end{equation}

The limit, if it exists, must equal the sum of the individual limits

\begin{equation}\label{eqn:more_feynmans_trick:160}

\frac{D \phi}{D t} = \sum_{\alpha = 0}^3 \lim_{h \rightarrow 0} \frac{ \phi(u_\alpha + v_\alpha h) - \phi(t, \Bx)}{h},

\end{equation}

but that is just a sum of derivatives, which can be evaluated by the chain rule

\begin{equation}\label{eqn:more_feynmans_trick:180}

\begin{aligned}

\frac{D \phi}{D t}

&= \sum_{\alpha = 0}^{3} \evalbar{ \PD{u_\alpha}{\phi(u_\alpha)} \PD{h}{u_\alpha} }{h = 0} \\

&= \PD{t}{\phi} + \sum_{k = 1}^3 v_k \PD{x_k}{\phi} \\

&= \lr{ \PD{t}{} + \Bv \cdot \spacegrad } \phi.

\end{aligned}

\end{equation}

End proof.
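As a sanity check on Theorem 1.1 (not part of the original derivation), here is a minimal sympy sketch that compares the total time derivative of a scalar field along a trajectory with \( \lr{ \partial_t + \Bv \cdot \spacegrad } \phi \). The trajectory `X` and the field `phi` are arbitrary choices made up for this illustration.

```python
import sympy as sp

t = sp.symbols('t', real=True)
x, y, z = sp.symbols('x y z', real=True)

# Hypothetical trajectory x(t), chosen only for illustration.
X = sp.Matrix([sp.cos(t), sp.sin(t), t**2])
V = X.diff(t)  # v = dx/dt

# Hypothetical scalar field phi(t, x); x, y, z are independent symbols here.
phi = t * x**2 * y + sp.exp(-t) * z * sp.sin(x)

on_path = {x: X[0], y: X[1], z: X[2]}

# Left side: total time derivative of phi evaluated along the trajectory.
lhs = sp.diff(phi.subs(on_path), t)

# Right side: (d/dt + v . grad) phi, with the partials taken before
# restricting to the trajectory.
grad_phi = sp.Matrix([phi.diff(c) for c in (x, y, z)])
rhs = (phi.diff(t) + V.dot(grad_phi)).subs(on_path)

# Difference of the two sides, which should vanish if the theorem holds.
residual = sp.simplify(lhs - rhs)
print(residual)
```

The key point is the order of operations: on the right the partial derivatives treat \( t \) and \( \Bx \) as independent, and only afterwards is \( \Bx \) replaced by \( \Bx(t) \).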

Definition 1.2: Hestenes overdot notation.

We may use a dot or a tick with a derivative operator, to designate the scope of that operator, allowing it to operate bidirectionally, or in a restricted fashion, holding specific multivector elements constant. This is called the Hestenes overdot notation. Illustrating by example, with multivectors \( F, G \), and allowing the gradient to act bidirectionally, we have

\begin{equation*}

\begin{aligned}

F \spacegrad G

&=

\dot{F} \dot{\spacegrad} G

+

F \dot{\spacegrad} \dot{G} \\

&=

\sum_i \lr{ \partial_i F } \Be_i G + \sum_i F \Be_i \lr{ \partial_i G }.

\end{aligned}

\end{equation*}

The last step is a precise statement of the meaning of the overdot notation, showing that we hold the position of the vector elements of the gradient constant, while the (scalar) partials are allowed to commute, acting on the designated elements.

We will need one additional identity:

Lemma 1.1: Gradient of dot product (one constant vector.)

Given vectors \( \Ba, \Bb \) the gradient of their dot product is given by

\begin{equation*}

\spacegrad \lr{ \Ba \cdot \Bb }

= \lr{ \Bb \cdot \spacegrad } \Ba - \Bb \cdot \lr{ \spacegrad \wedge \Ba }

+ \lr{ \Ba \cdot \spacegrad } \Bb - \Ba \cdot \lr{ \spacegrad \wedge \Bb }.

\end{equation*}

If \( \Bb \) is constant, this reduces to

\begin{equation*}

\spacegrad \lr{ \Ba \cdot \Bb }

=

\dot{\spacegrad} \lr{ \dot{\Ba} \cdot \Bb }

= \lr{ \Bb \cdot \spacegrad } \Ba - \Bb \cdot \lr{ \spacegrad \wedge \Ba }.

\end{equation*}

Start proof:

The \( \Bb \) constant case is trivial to prove. We use \( \Ba \cdot \lr{ \Bb \wedge \Bc } = \lr{ \Ba \cdot \Bb} \Bc - \Bb \lr{ \Ba \cdot \Bc } \), and simply expand the dot product of the vector with the curl

\begin{equation}\label{eqn:more_feynmans_trick:200}

\Bb \cdot \lr{ \spacegrad \wedge \Ba }

=

\Bb \cdot \lr{ \dot{\spacegrad} \wedge \dot{\Ba} }

= \lr{ \Bb \cdot \dot{\spacegrad} } \dot{\Ba} - \dot{\spacegrad} \lr{ \dot{\Ba} \cdot \Bb }.

\end{equation}

Rearrangement proves the constant \( \Bb \) identity. The more general statement follows from a chain rule evaluation of the gradient, holding each vector constant in turn

\begin{equation}\label{eqn:more_feynmans_trick:320}

\spacegrad \lr{ \Ba \cdot \Bb }

=

\dot{\spacegrad} \lr{ \dot{\Ba} \cdot \Bb }

+

\dot{\spacegrad} \lr{ \dot{\Bb} \cdot \Ba }.

\end{equation}

End proof.
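Lemma 1.1 can also be spot-checked symbolically. The sketch below works in conventional \(\mathbb{R}^3\) vector calculus, using \( -\Bb \cdot \lr{ \spacegrad \wedge \Ba } = \Bb \cross \lr{ \spacegrad \cross \Ba } \) to trade the wedge products for cross products. The fields `a`, `b` and the constant vector `b0` are arbitrary illustrative choices, and the helper names are hypothetical.

```python
import sympy as sp

x, y, z = sp.symbols('x y z', real=True)
coords = (x, y, z)

def grad(s):
    """Gradient of a scalar expression."""
    return sp.Matrix([sp.diff(s, c) for c in coords])

def curl(f):
    """Curl of a 3-component column vector."""
    return sp.Matrix([
        sp.diff(f[2], y) - sp.diff(f[1], z),
        sp.diff(f[0], z) - sp.diff(f[2], x),
        sp.diff(f[1], x) - sp.diff(f[0], y),
    ])

def directional(u, f):
    """(u . grad) f, applied componentwise to the vector f."""
    return sum((u[i] * f.diff(coords[i]) for i in range(3)), sp.zeros(3, 1))

# Arbitrary illustrative vector fields.
a = sp.Matrix([x * y, sp.sin(z) + x, y * z**2])
b = sp.Matrix([z, x**2 * y, sp.cos(x) * y])

# General case: both vectors vary with position.
lhs = grad(a.dot(b))
rhs = (directional(b, a) + b.cross(curl(a))
       + directional(a, b) + a.cross(curl(b)))
residual = (lhs - rhs).applyfunc(sp.simplify)

# Special case: b0 constant, so only the a-derivative terms survive.
b0 = sp.Matrix([1, 2, 3])
residual_const = (grad(a.dot(b0))
                  - directional(b0, a)
                  - b0.cross(curl(a))).applyfunc(sp.simplify)

print(residual.T, residual_const.T)
```

Both residuals are expected to be zero vectors; a nonzero component would indicate a sign error in one of the four terms.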

Time derivative of a line integral of a vector field.

We now have all our tools assembled, and can proceed to evaluate the derivative of the line integral. We want to show that

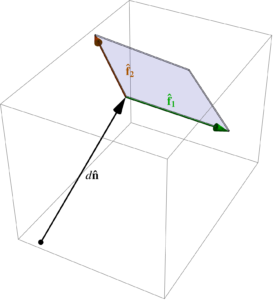

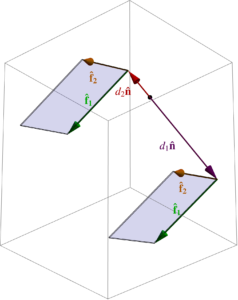

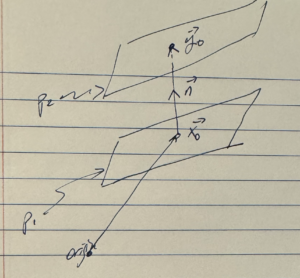

Theorem 1.2:

Given a path parameterized by \( \Bx(\lambda) \), where \( d\Bx = (\PDi{\lambda}{\Bx}) d\lambda \), with points along \( C(t) \) moving through space at a velocity \( \Bv(\Bx(\lambda)) \), and a vector function \( \Bf = \Bf(t, \Bx(\lambda)) \),

\begin{equation*}

\ddt{} \int_{C(t)} \Bf \cdot d\Bx =

\int_{C(t)} \lr{

\PD{t}{\Bf} + \spacegrad \lr{ \Bf \cdot \Bv } + \Bv \cdot \lr{ \spacegrad \wedge \Bf}

} \cdot d\Bx

\end{equation*}

Start proof:

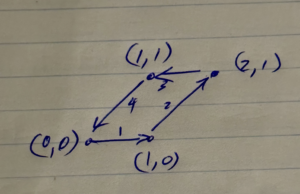

I’m going to avoid thinking about the rigorous details, like any requirements for curve continuity and smoothness. We will, however, specify that the end points of the curve correspond to the parameter interval \( [\lambda_1, \lambda_2] \). Expanding out the parameterization, we seek to evaluate

\begin{equation}\label{eqn:more_feynmans_trick:240}

\int_{C(t)} \Bf \cdot d\Bx

=

\int_{\lambda_1}^{\lambda_2} \Bf(t, \Bx(\lambda) ) \cdot \frac{\partial \Bx}{\partial \lambda} d\lambda.

\end{equation}

The parametric form nicely moves all the boundary time dependence into the integrand, allowing us to write

\begin{equation}\label{eqn:more_feynmans_trick:260}

\begin{aligned}

\ddt{} \int_{C(t)} \Bf \cdot d\Bx

&=

\lim_{\Delta t \rightarrow 0}

\inv{\Delta t}

\int_{\lambda_1}^{\lambda_2}

\lr{ \Bf(t + \Delta t, \Bx(\lambda) + \Delta t \Bv(\Bx(\lambda)) ) \cdot \frac{\partial}{\partial \lambda} \lr{ \Bx + \Delta t \Bv(\Bx(\lambda)) } - \Bf(t, \Bx(\lambda)) \cdot \frac{\partial \Bx}{\partial \lambda} } d\lambda \\

&=

\lim_{\Delta t \rightarrow 0}

\inv{\Delta t}

\int_{\lambda_1}^{\lambda_2}

\lr{ \Bf(t + \Delta t, \Bx(\lambda) + \Delta t \Bv(\Bx(\lambda)) ) - \Bf(t, \Bx)} \cdot \frac{\partial \Bx}{\partial \lambda} d\lambda \\

&\quad+

\lim_{\Delta t \rightarrow 0}

\int_{\lambda_1}^{\lambda_2}

\Bf(t + \Delta t, \Bx(\lambda) + \Delta t \Bv(\Bx(\lambda) )) \cdot \PD{\lambda}{}\Bv(\Bx(\lambda)) d\lambda \\

&=

\int_{\lambda_1}^{\lambda_2}

\frac{D \Bf}{Dt} \cdot \frac{\partial \Bx}{\partial \lambda} d\lambda +

\lim_{\Delta t \rightarrow 0}

\int_{\lambda_1}^{\lambda_2}

\Bf(t + \Delta t, \Bx(\lambda) + \Delta t \Bv(\Bx(\lambda)) ) \cdot \frac{\partial}{\partial \lambda} \Bv(\Bx(\lambda)) d\lambda \\

&=

\int_{\lambda_1}^{\lambda_2}

\lr{ \PD{t}{\Bf} + \lr{ \Bv \cdot \spacegrad } \Bf } \cdot \frac{\partial \Bx}{\partial \lambda} d\lambda

+

\int_{\lambda_1}^{\lambda_2}

\Bf \cdot \frac{\partial \Bv}{\partial \lambda} d\lambda

\end{aligned}

\end{equation}

At this point, we have a \( d\Bx \) in the first integrand, and a \( d\Bv \) in the second. We can expand the second integrand, evaluating the derivative using the chain rule, to find

\begin{equation}\label{eqn:more_feynmans_trick:280}

\begin{aligned}

\Bf \cdot \PD{\lambda}{\Bv}

&=

\sum_i \Bf \cdot \PD{x_i}{\Bv} \PD{\lambda}{x_i} \\

&=

\sum_{i,j} f_j \PD{x_i}{v_j} \PD{\lambda}{x_i} \\

&=

\sum_{j} f_j \lr{ \spacegrad v_j } \cdot \PD{\lambda}{\Bx} \\

&=

\sum_{j} \lr{ \dot{\spacegrad} f_j \dot{v_j} } \cdot \PD{\lambda}{\Bx} \\

&=

\dot{\spacegrad} \lr{ \Bf \cdot \dot{\Bv} } \cdot \PD{\lambda}{\Bx}.

\end{aligned}

\end{equation}

Substitution gives

\begin{equation}\label{eqn:more_feynmans_trick:300}

\begin{aligned}

\ddt{} \int_{C(t)} \Bf \cdot d\Bx

&=

\int_{C(t)}

\lr{ \PD{t}{\Bf} + \lr{ \Bv \cdot \spacegrad } \Bf + \dot{\spacegrad} \lr{ \Bf \cdot \dot{\Bv} } } \cdot \frac{\partial \Bx}{\partial \lambda} d\lambda \\

&=

\int_{C(t)}

\lr{ \PD{t}{\Bf}

+ \spacegrad \lr{ \Bf \cdot \Bv }

+ \lr{ \Bv \cdot \spacegrad } \Bf

- \dot{\spacegrad} \lr{ \dot{\Bf} \cdot \Bv }

} \cdot d\Bx \\

&=

\int_{C(t)}

\lr{ \PD{t}{\Bf}

+ \spacegrad \lr{ \Bf \cdot \Bv }

+ \Bv \cdot \lr{ \spacegrad \wedge \Bf }

} \cdot d\Bx,

\end{aligned}

\end{equation}

where the last simplification utilizes lemma 1.1.

End proof.

Since \( \Ba \cdot \lr{ \Bb \wedge \Bc } = -\Ba \cross \lr{ \Bb \cross \Bc } \), observe that we have also recovered \ref{eqn:more_feynmans_trick:20}.
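To make Theorem 1.2 concrete, the following sympy sketch checks it for one explicit moving curve, using the cross product form \ref{eqn:more_feynmans_trick:20}. Everything specific here is an assumption for illustration: the velocity field \( \Bv = (-y, x, z) \), the initial curve \( (\lambda, \lambda^2, \lambda^3) \) with \( \lambda \in [0, 1] \), and the field `f`. The curve `X` is the initial curve carried along by the flow of \( \Bv \), so that \( \partial \Bx/\partial t = \Bv(\Bx) \).

```python
import sympy as sp

t, lam = sp.symbols('t lambda', real=True)
x, y, z = sp.symbols('x y z', real=True)
coords = (x, y, z)

def grad(s):
    return sp.Matrix([sp.diff(s, c) for c in coords])

def curl(f):
    return sp.Matrix([
        sp.diff(f[2], y) - sp.diff(f[1], z),
        sp.diff(f[0], z) - sp.diff(f[2], x),
        sp.diff(f[1], x) - sp.diff(f[0], y),
    ])

# Assumed time-independent velocity field: rotation about z, stretching in z.
v = sp.Matrix([-y, x, z])

# Assumed initial curve, transported by the flow of v.
x0 = sp.Matrix([lam, lam**2, lam**3])
X = sp.Matrix([
    x0[0] * sp.cos(t) - x0[1] * sp.sin(t),
    x0[0] * sp.sin(t) + x0[1] * sp.cos(t),
    x0[2] * sp.exp(t),
])
on_curve = {x: X[0], y: X[1], z: X[2]}

# Confirm that points on the curve really move with velocity v(x).
flow_residual = (X.diff(t) - v.subs(on_curve)).applyfunc(sp.simplify)

# Assumed vector field with explicit time dependence and nonzero curl.
f = sp.Matrix([t * y * z, x**2, x * y + t])
dX = X.diff(lam)

# Left side: differentiate the parameterized line integral directly.
line_integral = sp.integrate(sp.expand(f.subs(on_curve).dot(dX)), (lam, 0, 1))
lhs = sp.diff(line_integral, t)

# Right side: integrate (df/dt + grad(f . v) - v x curl f) . dx.
field = f.diff(t) + grad(f.dot(v)) - v.cross(curl(f))
rhs = sp.integrate(sp.expand(field.subs(on_curve).dot(dX)), (lam, 0, 1))

residual = sp.simplify(lhs - rhs)
print(flow_residual.T, residual)
```

Note that the check relies on \( \Bv \) having no explicit time dependence, which matches the \( \Bv(\Bx(\lambda)) \) assumption in the theorem statement.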

Time derivative of a line integral of a bivector field.

For a bivector line integral, we have

Theorem 1.3:

Given a path parameterized by \( \Bx(\lambda) \), where \( d\Bx = (\PDi{\lambda}{\Bx}) d\lambda \), with points along \( C(t) \) moving through space at a velocity \( \Bv(\Bx(\lambda)) \), and a bivector function \( B = B(t, \Bx(\lambda)) \),

\begin{equation*}

\ddt{} \int_{C(t)} B \cdot d\Bx =

\int_{C(t)}

\PD{t}{B} \cdot d\Bx + \lr{ d\Bx \cdot \spacegrad } \lr{ B \cdot \Bv } + \lr{ \lr{ \Bv \wedge d\Bx } \cdot \spacegrad } \cdot B.

\end{equation*}

Start proof:

Skipping the steps that follow our previous procedure exactly, we have

\begin{equation}\label{eqn:more_feynmans_trick:340}

\ddt{} \int_{C(t)} B \cdot d\Bx =

\int_{C(t)}

\PD{t}{B} \cdot d\Bx + \lr{ \Bv \cdot \spacegrad } B \cdot d\Bx + B \cdot d\Bv.

\end{equation}

Since

\begin{equation}\label{eqn:more_feynmans_trick:360}

\begin{aligned}

B \cdot d\Bv

&= B \cdot \PD{\lambda}{\Bv} d\lambda \\

&= B \cdot \PD{x_i}{\Bv} \PD{\lambda}{x_i} d\lambda \\

&= B \cdot \lr{ \lr{ d\Bx \cdot \spacegrad } \Bv },

\end{aligned}

\end{equation}

we have

\begin{equation}\label{eqn:more_feynmans_trick:380}

\ddt{} \int_{C(t)} B \cdot d\Bx

=

\int_{C(t)}

\PD{t}{B} \cdot d\Bx + \lr{ \Bv \cdot \spacegrad } B \cdot d\Bx + B \cdot \lr{ \lr{ d\Bx \cdot \spacegrad } \Bv }.

\end{equation}

Let’s reduce the last two terms in this integrand

\begin{equation}\label{eqn:more_feynmans_trick:400}

\begin{aligned}

\lr{ \Bv \cdot \spacegrad } B \cdot d\Bx + B \cdot \lr{ \lr{ d\Bx \cdot \spacegrad } \Bv }

&=

\lr{ \Bv \cdot \spacegrad } B \cdot d\Bx -

\lr{ d\Bx \cdot \dot{\spacegrad} } \lr{ \dot{\Bv} \cdot B } \\

&=

\lr{ \Bv \cdot \spacegrad } B \cdot d\Bx

- \lr{ d\Bx \cdot \spacegrad} \lr{ \Bv \cdot B }

+ \lr{ d\Bx \cdot \dot{\spacegrad} } \lr{ \Bv \cdot \dot{B} } \\

&=

\lr{ d\Bx \cdot \spacegrad} \lr{ B \cdot \Bv }

+ \lr{ \Bv \cdot \dot{\spacegrad} } \dot{B} \cdot d\Bx

+ \lr{ d\Bx \cdot \dot{\spacegrad} } \lr{ \Bv \cdot \dot{B} } \\

&=

\lr{ d\Bx \cdot \spacegrad} \lr{ B \cdot \Bv }

+ \lr{ \Bv \lr{ d\Bx \cdot \spacegrad } - d\Bx \lr{ \Bv \cdot \spacegrad } } \cdot B \\

&=

\lr{ d\Bx \cdot \spacegrad} \lr{ B \cdot \Bv }

+ \lr{ \lr{ \Bv \wedge d\Bx } \cdot \spacegrad } \cdot B.

\end{aligned}

\end{equation}

Back substitution finishes the job.

End proof.

Time derivative of a multivector line integral.

Theorem 1.4: Time derivative of multivector line integral.

Given a path parameterized by \( \Bx(\lambda) \), where \( d\Bx = (\PDi{\lambda}{\Bx}) d\lambda \), with points along \( C(t) \) moving through space at a velocity \( \Bv(\Bx(\lambda)) \), and multivector functions \( M = M(t, \Bx(\lambda)), N = N(t, \Bx(\lambda)) \),

\begin{equation*}

\ddt{} \int_{C(t)} M d\Bx N =

\int_{C(t)}

\frac{D}{D t} M d\Bx N + M \lr{ \lr{ d\Bx \cdot \dot{\spacegrad} } \dot{\Bv} } N.

\end{equation*}

It is useful to write this out explicitly for clarity

\begin{equation}\label{eqn:more_feynmans_trick:420}

\ddt{} \int_{C(t)} M d\Bx N =

\int_{C(t)}

\PD{t}{M} d\Bx N + M d\Bx \PD{t}{N}

+ \dot{M} d\Bx \lr{ \Bv \cdot \dot{\spacegrad} } N

+ M d\Bx \lr{ \Bv \cdot \dot{\spacegrad} } \dot{N}

+ M \lr{ \lr{ d\Bx \cdot \dot{\spacegrad} } \dot{\Bv} } N.

\end{equation}

Proof is left to the reader, but follows the patterns above.

It’s not obvious whether there is a nice way to reduce this, as we did for the scalar valued line integral of a vector function, and the vector valued line integral of a bivector function. In particular, our vector and bivector results had \( \spacegrad \lr{ \Bf \cdot \Bv } \) and \( \spacegrad \lr{ B \cdot \Bv } \) terms respectively, which allow for the boundary term to be evaluated using Stokes’ theorem. Is such a manipulation possible here?

Coming later: surface integrals!

References

[1] Nicholas Kemmer. Vector Analysis: A physicist’s guide to the mathematics of fields in three dimensions. CUP Archive, 1977.

[2] Wikipedia contributors. Leibniz integral rule — Wikipedia, the free encyclopedia. https://en.wikipedia.org/w/index.php?title=Leibniz_integral_rule&oldid=1223666713, 2024. [Online; accessed 22-May-2024].
