[Click here for a PDF of this post with nicer formatting]

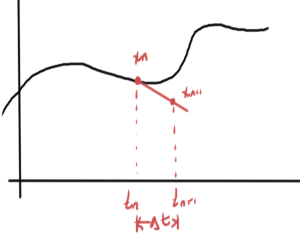

In [1] a verification of the discrete Fourier transform pairs was performed. A much different looking discrete Fourier transform pair is given in [2] $ A.4. This transform pair samples the points at what are called the Nykvist time instants given by

\begin{equation}\label{eqn:discreteFourier:20}

t_k = \frac{T k}{2 N + 1}, \qquad k \in [-N, \cdots N]

\end{equation}

Note that the endpoints of these sampling points are not \( \pm T/2 \), but are instead at

\begin{equation}\label{eqn:discreteFourier:40}

\pm \frac{T}{2} \inv{1 + 1/N},

\end{equation}

which are slightly within the interior of the \( [-T/2, T/2] \) range of interest. The reason for this slightly odd seeming selection of sampling times becomes clear if one calculate the inversion relations.

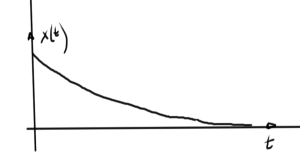

Given a periodic (\( \omega_0 T = 2 \pi \)) bandwith limited signal evaluated only at the Nykvist times \( t_k \),

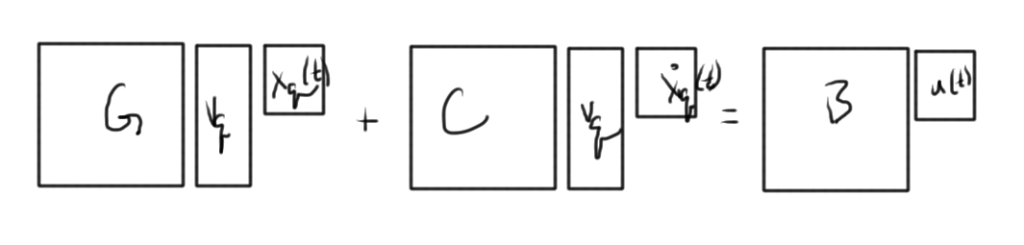

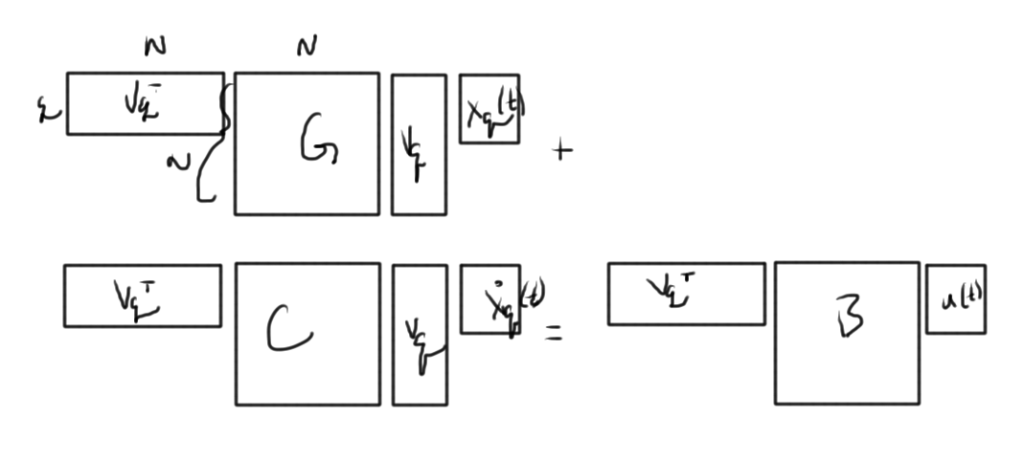

\begin{equation}\label{eqn:discreteFourier:60}

\boxed{

x(t_k) = \sum_{n = -N}^N X_n e^{ j n \omega_0 t_k},

}

\end{equation}

assume that an inversion relation can be found. To find \( X_n \) evaluate the sum

\begin{equation}\label{eqn:discreteFourier:80}

\begin{aligned}

&\sum_{k = -N}^N x(t_k) e^{-j m \omega_0 t_k} \\

\qquad &=

\sum_{k = -N}^N

\lr{

\sum_{n = -N}^N X_n e^{ j n \omega_0 t_k}

}

e^{-j m \omega_0 t_k} \\

\qquad &=

\sum_{n = -N}^N X_n

\sum_{k = -N}^N

e^{ j (n -m )\omega_0 t_k}

\end{aligned}

\end{equation}

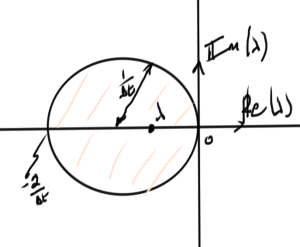

This interior sum has the value \( 2 N + 1 \) when \( n = m \). For \( n \ne m \), and

\( a = e^{j (n -m ) \frac{2 \pi}{2 N + 1}} \), this is

\begin{equation}\label{eqn:discreteFourier:100}

\begin{aligned}

\sum_{k = -N}^N

e^{ j (n -m )\omega_0 t_k}

&=

\sum_{k = -N}^N

e^{ j (n -m )\omega_0 \frac{T k}{2 N + 1}} \\

&=

\sum_{k = -N}^N a^k \\

&=

a^{-N} \sum_{k = -N}^N a^{k+ N} \\

&=

a^{-N} \sum_{r = 0}^{2 N} a^{r} \\

&=

a^{-N} \frac{a^{2 N + 1} – 1}{a – 1}.

\end{aligned}

\end{equation}

Since \( a^{2 N + 1} = e^{2 \pi j (n – m)} = 1 \), this sum is zero when \( n \ne m \). This means that

\begin{equation}\label{eqn:discreteFourier:120}

\sum_{k = -N}^N

e^{ j (n -m )\omega_0 t_k} = (2 N + 1) \delta_{n,m},

\end{equation}

which provides the desired Fourier inversion relation

\begin{equation}\label{eqn:discreteFourier:140}

\boxed{

X_m = \inv{2 N + 1} \sum_{k = -N}^N x(t_k) e^{-j m \omega_0 t_k}.

}

\end{equation}

References

[1] Peeter Joot. Condensed matter physics., appendix: Discrete Fourier transform. 2013. URL https://peeterjoot.com/archives/math2013/phy487.pdf. [Online; accessed 02-December-2014].

[2] Giannini and Leuzzi Nonlinear Microwave Circuit Design. Wiley Online Library, 2004.