
Conventional formulation.

The idea behind introducing the scalar potential \( \phi \) and vector potential \( \BA \) is that we can impose a constraint on the form of our observable fields \( \BE, \BB \), (or \( \BD, \BH \)), that reduces the complexity and coupling of Maxwell’s equations. These potentials are not unique, but the types of allowed variations in those potentials (gauge transformations) do not change the observable fields.

The basic idea is that we are looking for representations of the fields that automatically satisfy the pair of source free Maxwell’s equations

\begin{equation}\label{eqn:gapotentials:40}

\begin{aligned}

\spacegrad \cdot \BB &= 0 \\

c \partial_0 \BB + \spacegrad \cross \BE &= 0,

\end{aligned}

\end{equation}

so that the problem is reduced to solving just the remaining source dependent Maxwell’s equations.

The conventional way of constructing these potentials makes use of the identities

\begin{equation}\label{eqn:gapotentials:60}

\begin{aligned}

\spacegrad \cdot \lr{ \spacegrad \cross \Bf } &= 0 \\

\spacegrad \cross \lr{ \spacegrad \chi } &= 0,

\end{aligned}

\end{equation}

where \( \Bf \) is a vector, and \( \chi \) is a scalar. This approach is straightforward. Instead of replicating it, here are a few well known references where such a treatment can be found

- section 18-6 potentials and the wave equation in [2] (available online),

- section 10.1 The potential formulation in [3], and

- section 6.4 Vector and Scalar Potentials, in [4].

Multivector potentials in geometric algebra.

The multivector form of Maxwell’s equation is

\begin{equation}\label{eqn:gapotentials:820}

\lr{ \spacegrad + \partial_0 } F = J,

\end{equation}

where \( \partial_0 = (1/c)\partial/\partial t \), the electromagnetic field \( F = \BE + I c \BB = \BE + I \eta \BH \) has grades(1,2), and \( J \) is a multivector charge and current density. Grades(0,1) of the current are the electric charge and current densities respectively and, if desired, the grade(2,3) portion of the current holds the fictitious magnetic current and charge densities (used in microwave and antenna engineering).

It’s best to consider the case of electric sources, separately from the case of (fictitious) magnetic sources, and then use superposition to construct a potential representation that includes both.

We require a tool that generalizes the \(\mathbb{R}^3\) cross product curl identities above.

Lemma 1.1: Curl of curl.

Let \( A \in \bigwedge^k \) be a blade of grade \( k \). Then

\begin{equation*}

\nabla \wedge \nabla \wedge A = 0.

\end{equation*}

Observe that for scalar \( A \), this reduces to

\begin{equation}\label{eqn:gapotentials:1740}

\nabla \wedge \nabla A = 0.

\end{equation}

We’ve recently proved this, so we won’t do it again now.
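As a quick sanity check of the scalar case, here is a sketch in an orthonormal basis \( \Be_1, \Be_2, \Be_3 \), with \( \partial_i = \partial/\partial x^i \), assuming that \( A \) has continuous second partials so that mixed partials commute

\begin{equation*}
\begin{aligned}
\nabla \wedge \nabla A
&= \sum_{i,j} \lr{ \Be_i \wedge \Be_j } \partial_i \partial_j A \\
&= \sum_{i < j} \lr{ \Be_i \wedge \Be_j } \lr{ \partial_i \partial_j - \partial_j \partial_i } A \\
&= 0.
\end{aligned}
\end{equation*}

The antisymmetry of the wedge product, paired against the symmetry of the mixed partials, kills each term.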

Now we are ready to figure out the structure of the potentials.

Case I. No (fictitious) magnetic sources.

Without magnetic sources, Maxwell’s equation is

\begin{equation}\label{eqn:gapotentials:840}

\lr{ \spacegrad + \partial_0 } F = \gpgrade{J}{0,1}.

\end{equation}

This can be split into two equations, one that has just the sources, and one that is source free

\begin{equation}\label{eqn:gapotentials:860}

\gpgrade{ \lr{ \spacegrad + \partial_0 } F }{0,1} = \gpgrade{J}{0,1},

\end{equation}

\begin{equation}\label{eqn:gapotentials:880}

\gpgrade{ \lr{ \spacegrad + \partial_0 } F }{2,3} = 0.

\end{equation}

If you are clever, or have the benefit of having worked out the answer already, you can look directly at \ref{eqn:gapotentials:880} and guess the multivector form for the potential. Hint: you want something closely related to \( F = \lr{ \spacegrad - \partial_0 } A \), where \( A \) has grades(0,1).

If you aren’t that clever, or don’t have a time machine that lets you look that clever, you’ll have to work it out systematically like the rest of us. We can start by breaking down \( F \) into its constituent observer dependent fields. That means that we want to find values for \( \BE, \BH \) that satisfy

\begin{equation}\label{eqn:gapotentials:900}

\gpgrade{ \lr{ \spacegrad + \partial_0 } \lr{ \BE + I \eta \BH } }{2,3} = 0.

\end{equation}

Expanding the multivector factors gives us

\begin{equation}\label{eqn:gapotentials:920}

\begin{aligned}

\gpgrade{ \lr{ \spacegrad + \partial_0 } \lr{ \BE + I \eta \BH } }{2,3}

&=\gpgradetwo{\spacegrad \BE} + \gpgradethree{I \eta \spacegrad \BH} + I \eta \partial_0 \BH \\

&=

\spacegrad \wedge \BE

+ \spacegrad \wedge \lr{ I \eta \BH }

+ I \eta \partial_0 \BH.

\end{aligned}

\end{equation}

Splitting this into one equation for each grade, leaves us with

\begin{equation}\label{eqn:gapotentials:940}

0 = \spacegrad \wedge \BE + I \eta \partial_0 \BH

\end{equation}

\begin{equation}\label{eqn:gapotentials:960}

0 = \spacegrad \wedge \lr{ I \eta \BH }.

\end{equation}

Observe that we could have also written \ref{eqn:gapotentials:960} as \( 0 = I \eta \lr{ \spacegrad \cdot \BH } \), which is the starting point of the conventional non-GA approach.
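To see why the trivector curl reduces to a divergence, here is a short expansion. It is a sketch that assumes a constant \( \eta \), and uses only the facts that the \(\mathbb{R}^3\) pseudoscalar \( I \) commutes with vectors, and that multiplication by \( I \) maps grade \( k \) to grade \( 3 - k \)

\begin{equation*}
\begin{aligned}
\spacegrad \wedge \lr{ I \eta \BH }
&= \gpgradethree{ \spacegrad I \eta \BH } \\
&= I \eta \gpgrade{ \spacegrad \BH }{0} \\
&= I \eta \lr{ \spacegrad \cdot \BH }.
\end{aligned}
\end{equation*}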

It’s clear that we want to write \( I \eta \BH = I c \BB \) as a (bivector) curl, and let

\begin{equation}\label{eqn:gapotentials:980}

I \eta \BH = c \spacegrad \wedge \BA.

\end{equation}

It’s a bit sneaky to toss that factor of \( c \) in here, but that’s done to make the units of \( \BA \) turn out in a way that matches the conventional vector potential. If it makes you feel better, you can think of this as an undetermined multiplicative constant that will be used to adjust the dimensions of \( \BA \) down the line.

Having made that choice, \ref{eqn:gapotentials:960} is automatically satisfied, and \ref{eqn:gapotentials:940} is reduced to

\begin{equation}\label{eqn:gapotentials:1000}

\begin{aligned}

0

&= \spacegrad \wedge \BE + I \eta \partial_0 \BH \\

&= \spacegrad \wedge \BE + \partial_0 \spacegrad \wedge \lr{ c \BA } \\

&= \spacegrad \wedge \lr{ \BE + c \partial_0 \BA }.

\end{aligned}

\end{equation}

We can now let

\begin{equation}\label{eqn:gapotentials:1020}

\BE + \partial_0 c \BA = -\spacegrad \phi.

\end{equation}

Again, we had the option of including an arbitrary multiplicative constant, but this time, we managed to find the right switch for our time machine, and look ahead to see that we want that constant to be \( -1 \) in order to have agreement with the conventional result.

We are left with a potential construction for our individual field components

\begin{equation}\label{eqn:gapotentials:1040}

\begin{aligned}

\BE &= -\spacegrad \phi - c \partial_0 \BA \\

I \eta \BH &= c \spacegrad \wedge \BA,

\end{aligned}

\end{equation}

or

\begin{equation}\label{eqn:gapotentials:1060}

F = -\spacegrad \phi - c \partial_0 \BA + c \spacegrad \wedge \BA.

\end{equation}

This automatically satisfies the grades of Maxwell’s equation that are source free, leaving us to solve just

\begin{equation}\label{eqn:gapotentials:1080}

\gpgrade{ \lr{ \spacegrad + \partial_0 } F }{0,1} = \gpgrade{J}{0,1}.

\end{equation}

Multivector potential.

It’s natural to wonder if there is a more structured form for \( F \) than \ref{eqn:gapotentials:1060}, just as we found a GA structure for Maxwell’s equation that eliminated the crazy mix of divs and curls that we had in the original Gibbs representation. Let’s find that structure. To do so, we can enclose \( F \) in a no-op grade selection operation

\begin{equation}\label{eqn:gapotentials:1100}

\begin{aligned}

F

&= \gpgrade{ -\spacegrad \phi - c \partial_0 \BA + c \spacegrad \wedge \BA }{1,2} \\

&= \gpgrade{ -\spacegrad \phi - c \partial_0 \BA + c \spacegrad \BA }{1,2} \\

&= \gpgrade{ \spacegrad \lr{ -\phi + c \BA } - c \partial_0 \BA + \lr{ \partial_0 \phi - \partial_0 \phi } }{1,2} \\

&= \gpgrade{ \lr{ \spacegrad - \partial_0 } \lr{ -\phi + c \BA } }{1,2}.

\end{aligned}

\end{equation}

We can now introduce a multivector potential, and express the remaining non-zero grades of Maxwell’s equation in terms of this potential

\begin{equation}\label{eqn:gapotentials:1120}

\begin{aligned}

A &= -\phi + c \BA \\

F &= \gpgrade{ \lr{ \spacegrad - \partial_0 } A }{1,2} \\

\gpgrade{J}{0,1} &= \gpgrade{ \lr{ \spacegrad + \partial_0 } F }{0,1}.

\end{aligned}

\end{equation}
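For reference, the full product inside that grade selection can be expanded by grade. This is a sketch that uses \( \spacegrad \BA = \spacegrad \cdot \BA + \spacegrad \wedge \BA \)

\begin{equation*}
\lr{ \spacegrad - \partial_0 } \lr{ -\phi + c \BA }
=
\lr{ \partial_0 \phi + c \spacegrad \cdot \BA }
+ \lr{ -\spacegrad \phi - c \partial_0 \BA }
+ c \spacegrad \wedge \BA.
\end{equation*}

The three groups are the grade 0, 1, and 2 parts respectively. The grade(1,2) selection discards only the scalar term, leaving \( \BE \) and \( I \eta \BH \).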

Lorentz gauge.

The grade selection in our representation of \( F \) is a bit annoying, and can be eliminated if we impose additional constraints on the potential. We can write

\begin{equation}\label{eqn:gapotentials:1140}

F =

\lr{ \spacegrad - \partial_0 } A

-

\gpgrade{ \lr{ \spacegrad - \partial_0 } A }{0,3},

\end{equation}

and then ask what conditions are required for this grade(0,3) selection to be zero. In terms of our constituent potentials, that is

\begin{equation}\label{eqn:gapotentials:1160}

\begin{aligned}

0 &=

\gpgrade{ \lr{ \spacegrad - \partial_0 } A }{0,3} \\

&=

\gpgrade{ \lr{ \spacegrad - \partial_0 } \lr{ -\phi + c \BA } }{0,3} \\

&=

c \spacegrad \cdot \BA + \partial_0 \phi.

\end{aligned}

\end{equation}

This is the Lorentz gauge condition, recognized a bit more easily if written out in terms of the time partials explicitly

\begin{equation}\label{eqn:gapotentials:1180}

\inv{c^2} \PD{t}{\phi} + \spacegrad \cdot \BA = 0.

\end{equation}

We can now write Maxwell’s equations, in the potential formulation, as

\begin{equation}\label{eqn:gapotentials:1200}

\begin{aligned}

A &= -\phi + c \BA \\

F &= \lr{ \spacegrad - \partial_0 } A \\

0 &= \inv{c} \gpgrade{ \lr{ \spacegrad - \partial_0 } A }{0,3} = \inv{c^2} \PD{t}{\phi} + \spacegrad \cdot \BA \\

\gpgrade{J}{0,1} &= \gpgrade{ \lr{ \spacegrad + \partial_0 } F }{0,1} = \lr{ \spacegrad^2 - \partial_{00} } A.

\end{aligned}

\end{equation}

This is quite nice. We have a one to one decoupled relationship between the potential and the current, and are free to use the well known techniques for solving the wave equation (using convolution and a superposition of advanced and retarded Green’s functions for the wave equation operator.)
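The wave equation operator arises because the scalar operator \( \partial_0 \) commutes with \( \spacegrad \), so the cross terms cancel. A one line check, assuming a sufficiently smooth \( A \), is

\begin{equation*}
\lr{ \spacegrad + \partial_0 } \lr{ \spacegrad - \partial_0 } A
= \lr{ \spacegrad^2 - \spacegrad \partial_0 + \partial_0 \spacegrad - \partial_{00} } A
= \lr{ \spacegrad^2 - \partial_{00} } A.
\end{equation*}

Since \( \spacegrad^2 - \partial_{00} \) is a scalar operator, it preserves grades, so the result has the same grades(0,1) as \( A \).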

Gauge transformation.

There’s one more thing that we should look at before moving on to the magnetic sources case, and that’s the question of gauge freedom. We’ve said that the potentials are not unique, but this non-uniqueness has a very specific form.

We’ve constructed \( F \) with a grade selection as

\begin{equation}\label{eqn:gapotentials:1220}

F = \gpgrade{ \lr{ \spacegrad - \partial_0 } A }{1,2},

\end{equation}

so it’s clear that any transformation

\begin{equation}\label{eqn:gapotentials:1240}

A \rightarrow A + \lr{ \spacegrad + \partial_0 } \psi_{0,3},

\end{equation}

where \( \psi_{0,3} \) is any multivector with grades(0,3) components, will leave \( F \) invariant. That is

\begin{equation}\label{eqn:gapotentials:1260}

\begin{aligned}

A &= -\phi + c \BA \\

&\rightarrow

-\phi + c \BA + \lr{ \spacegrad + \partial_0 } \psi_{0,3} \\

&=

-\phi + c \BA + \lr{ \spacegrad + \partial_0 } \lr{ c \psi + I \bar{\psi} } \\

&=

\lr{ -\phi + c \partial_0 \psi }

+ c \lr{ \BA + \spacegrad \psi }

+ I \spacegrad \bar{\psi}

+ I \partial_0 \bar{\psi}.

\end{aligned}

\end{equation}

We see that the contributions of \( \bar{\psi} \) result in grade(2,3) terms, which are not of interest, and we find that a paired transformation of the potentials

\begin{equation}\label{eqn:gapotentials:1280}

\begin{aligned}

\phi &\rightarrow \phi - \PD{t}{\psi} \\

\BA &\rightarrow \BA + \spacegrad \psi,

\end{aligned}

\end{equation}

called a gauge transformation, leaves the field \( F \) unchanged. This can be expressed slightly more compactly as

\begin{equation}\label{eqn:gapotentials:1300}

A \rightarrow A + \lr{ \spacegrad + \partial_0 } c \psi,

\end{equation}

where, once again, the multiplicative constant \( c \) is included for consistency with the conventional expression for the potential gauge transformation.
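A short check that this transformation really does leave the field alone, assuming that \( \psi \) is a twice differentiable scalar function, is to compute the change in \( F \)

\begin{equation*}
\gpgrade{ \lr{ \spacegrad - \partial_0 } \lr{ \spacegrad + \partial_0 } c \psi }{1,2}
= \gpgrade{ c \lr{ \spacegrad^2 - \partial_{00} } \psi }{1,2}
= 0,
\end{equation*}

which vanishes since a scalar operator acting on a scalar function has no grade 1 or grade 2 parts.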

Case II. With (fictitious) magnetic sources.

With magnetic sources, Maxwell’s equation is

\begin{equation}\label{eqn:gapotentials:1500}

\lr{ \spacegrad + \partial_0 } F = \gpgrade{J}{2,3}.

\end{equation}

We put this in dual form

\begin{equation}\label{eqn:gapotentials:1520}

\lr{ \spacegrad + \partial_0 } I F = I \gpgrade{J}{2,3},

\end{equation}

which now has the sources all with grades (0,1) as we just analyzed. The dual field \( I F \), like \( F \), has only grade(1,2) components.

Expanding the source free Maxwell’s equations in terms of \( \BE, \BH \), we have

\begin{equation}\label{eqn:gapotentials:1340}

\begin{aligned}

0

&= \gpgrade{ \lr{ \spacegrad + \partial_0 } I F}{2,3} \\

&= \gpgrade{ \lr{ \spacegrad + \partial_0 } \lr{I \BE - \eta \BH } }{2,3} \\

&= \gpgrade{ I \spacegrad \BE - \eta \spacegrad \BH + I \partial_0 \BE - \eta \partial_0 \BH }{2,3} \\

&= \spacegrad \wedge \lr{ I \BE } - \eta \spacegrad \wedge \BH + I \partial_0 \BE,

\end{aligned}

\end{equation}

or, by grade

\begin{equation}\label{eqn:gapotentials:1360}

0 = \spacegrad \wedge \lr{ I \BE },

\end{equation}

\begin{equation}\label{eqn:gapotentials:1361}

0 = - \eta \spacegrad \wedge \BH + I \partial_0 \BE.

\end{equation}

We see that the dual electric field needs to be a curl to satisfy \ref{eqn:gapotentials:1360}

\begin{equation}\label{eqn:gapotentials:1400}

I \BE = -\eta \spacegrad \wedge c \BF,

\end{equation}

and after substitution into \ref{eqn:gapotentials:1361} we are left with

\begin{equation}\label{eqn:gapotentials:1540}

\begin{aligned}

0

&= - \eta \spacegrad \wedge \BH + \partial_0 \lr{ - \eta \spacegrad \wedge c \BF } \\

&= \eta \spacegrad \wedge \lr{ -\BH - \partial_0 c \BF }.

\end{aligned}

\end{equation}

We set

\begin{equation}\label{eqn:gapotentials:1420}

-\BH - \partial_0 c \BF = \spacegrad \phi_m.

\end{equation}

Our fields are

\begin{equation}\label{eqn:gapotentials:1440}

\begin{aligned}

\BE &= - \inv{\epsilon} \spacegrad \cross \BF \\

\BH &= -\spacegrad \phi_m - \PD{t}{\BF}.

\end{aligned}

\end{equation}

This has the structure that matches the potential conventions from antenna theory, for example as stated in [1].
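The conversion from the bivector form to the cross product form uses \( \spacegrad \wedge \BF = I \lr{ \spacegrad \cross \BF } \). The constants work out as follows, assuming the usual definitions \( \eta = \sqrt{\mu/\epsilon} \) and \( c = 1/\sqrt{\mu\epsilon} \), which are not stated explicitly above

\begin{equation*}
\begin{aligned}
\BE &= -I \lr{ I \BE } = I \eta c \spacegrad \wedge \BF = I^2 \eta c \spacegrad \cross \BF = - \eta c \spacegrad \cross \BF \\
\eta c &= \sqrt{\frac{\mu}{\epsilon}} \inv{\sqrt{\mu\epsilon}} = \inv{\epsilon}.
\end{aligned}
\end{equation*}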

Multivector potential.

As with the electric sources, we expect that we can write this as something like

\begin{equation}\label{eqn:gapotentials:1460}

F = \gpgrade{ \lr{ \spacegrad - \partial_0 } I A }{1,2}.

\end{equation}

Let’s verify that this is the case.

\begin{equation}\label{eqn:gapotentials:1480}

\begin{aligned}

F

&= I \eta \spacegrad \wedge (c \BF) -I \eta \spacegrad \phi_m - I \eta \partial_0 c \BF \\

&= \gpgrade{ I \eta \spacegrad \wedge (c \BF) -I \eta \spacegrad \phi_m - I \eta \partial_0 c \BF }{1,2} \\

&= \gpgrade{ I \eta \spacegrad c \BF -I \eta \spacegrad \phi_m - I \eta \partial_0 c \BF }{1,2} \\

&= \gpgrade{ I \eta \lr{ \spacegrad \lr{ - \phi_m + c \BF } - \partial_0 c \BF + \partial_0 \phi_m - \partial_0 \phi_m} }{1,2} \\

&= \gpgrade{ \lr{ \spacegrad - \partial_0 } I \eta \lr{ - \phi_m + c \BF } }{1,2}.

\end{aligned}

\end{equation}

Lorentz gauge.

Let’s see what constraints we need to write our field in terms of a potential without a grade selection, that is

\begin{equation}\label{eqn:gapotentials:1560}

F = \lr{ \spacegrad - \partial_0 } I \eta \lr{ - \phi_m + c \BF }.

\end{equation}

We need the grade(0,3) components of this multivector to be zero. Those components are

\begin{equation}\label{eqn:gapotentials:1580}

\begin{aligned}

0 &=

\gpgrade{ \lr{ \spacegrad - \partial_0 } I \eta \lr{ - \phi_m + c \BF }}{0,3} \\

&=

\gpgrade{-\spacegrad I \eta \phi_m+\spacegrad I \eta c \BF+ \partial_0 I \eta \phi_m - \partial_0 I \eta c \BF }{0,3} \\

&=

\gpgradethree{ \spacegrad I \eta c \BF }

+ \partial_0 I \eta \phi_m \\

&=

I \eta \lr{ c \lr{ \spacegrad \cdot \BF} + \partial_0 \phi_m },

\end{aligned}

\end{equation}

or

\begin{equation}\label{eqn:gapotentials:1600}

0 = \inv{c^2} \PD{t}{\phi_m} + \spacegrad \cdot \BF.

\end{equation}

This is the Lorentz gauge condition. With this condition we can express Maxwell’s equation with magnetic sources as a forced wave equation

\begin{equation}\label{eqn:gapotentials:1620}

\begin{aligned}

A &= I \eta \lr{ -\phi_m + c \BF } \\

F &= \lr{ \spacegrad - \partial_0 } A \\

0 &= \inv{c} \gpgrade{ \lr{ \spacegrad - \partial_0 } A }{0,3} = I \eta \lr{ \inv{c^2} \PD{t}{\phi_m} + \spacegrad \cdot \BF } \\

\gpgrade{J}{2,3} &= \gpgrade{ \lr{ \spacegrad + \partial_0 } F }{2,3} = \lr{ \spacegrad^2 - \partial_{00} } A.

\end{aligned}

\end{equation}

Gauge transformation.

Without the Lorentz gauge assumption, our potential representation for the field is

\begin{equation}\label{eqn:gapotentials:1640}

\begin{aligned}

A &= I \eta \lr{ -\phi_m + c \BF } \\

F &= \gpgrade{ \lr{ \spacegrad - \partial_0 } A }{1,2}.

\end{aligned}

\end{equation}

It’s clear that any transformation of the form

\begin{equation}\label{eqn:gapotentials:1660}

A \rightarrow A + \lr{ \spacegrad + \partial_0 } \psi_{0,3},

\end{equation}

leaves the field unchanged.

\begin{equation}\label{eqn:gapotentials:1680}

\begin{aligned}

A &= I \eta \lr{ -\phi_m + c \BF } \\

&\rightarrow

I \eta \lr{ -\phi_m + c \BF } + \lr{ \spacegrad + \partial_0 } \psi_{0,3} \\

&=

I \eta \lr{ -\phi_m + c \BF } + \lr{ \spacegrad + \partial_0 } \lr{ \psi + I \eta c \bar{\psi} } \\

&=

I \eta \lr{

-\phi_m

+ c \partial_0 \bar{\psi}

+ c \BF

+ c \spacegrad \bar{\psi}

}

+ \lr{ \spacegrad + \partial_0 } \psi.

\end{aligned}

\end{equation}

We can drop the \( \psi \) contributions, since this time we want only grades(2,3) in our potential, and find that the desired form of the gauge transformation, for scalar \( \bar{\psi} \), is

\begin{equation}\label{eqn:gapotentials:1700}

\begin{aligned}

\phi_m &\rightarrow \phi_m - \PD{t}{\bar{\psi}} \\

\BF &\rightarrow \BF + \spacegrad \bar{\psi}.

\end{aligned}

\end{equation}

The multivector form of this is

\begin{equation}\label{eqn:gapotentials:1720}

A \rightarrow A + \lr{ \spacegrad + \partial_0 } I \eta c \bar{\psi}.

\end{equation}

Superposition.

We can now use superposition to construct a potential representation that works for both conventional electric and fictitious magnetic charges and currents.

Without a Lorentz gauge assumption, that is

\begin{equation}\label{eqn:gapotentials:1760}

\begin{aligned}

A &= -\phi + c \BA + I \eta \lr{ -\phi_m + c \BF } \\

F &= \gpgrade{ \lr{ \spacegrad – \partial_0 } A }{1,2} \\

J &= \lr{ \spacegrad + \partial_0 } F,

\end{aligned}

\end{equation}

where, given scalar functions \( \psi, \bar{\psi} \), we are free to make gauge transformations of the multivector potential that satisfy

\begin{equation}\label{eqn:gapotentials:1800}

A \rightarrow A + \lr{ \spacegrad + \partial_0 } \lr{ c \psi + I \eta c \bar{\psi} }.

\end{equation}

With a Lorentz gauge constraint, we have a wave equation operator acting on \( A \), with the multivector current as a forcing term.

\begin{equation}\label{eqn:gapotentials:1780}

\begin{aligned}

A &= -\phi + c \BA + I \eta \lr{ -\phi_m + c \BF } \\

0 &= \gpgrade{ \lr{ \spacegrad – \partial_0 } A }{0,3} \\

F &= \lr{ \spacegrad - \partial_0 } A \\

J &= \lr{ \spacegrad^2 - \partial_{00} } A.

\end{aligned}

\end{equation}

Check.

It’s worth expanding this to verify that we got all the dimensional constants right, and to compare the results to Maxwell’s equations in their Gibbs form.

Let’s start with an expansion of \( F \) in terms of the potentials

\begin{equation}\label{eqn:gapotentials:1820}

\begin{aligned}

F &=

\gpgrade{\lr{ \spacegrad - \partial_0 } A }{1,2} \\

&= \gpgrade{ \lr{ \spacegrad - \partial_0 } \lr{ -\phi + c \BA + I \eta \lr{ -\phi_m + c \BF } } }{1,2} \\

&=

\gpgrade{ \spacegrad \lr{ -\phi + c \BA + I \eta \lr{ -\phi_m + c \BF } } -\partial_0 \lr{ -\phi + c \BA + I \eta \lr{ -\phi_m + c \BF } } }{1,2} \\

&=

\gpgrade{ \spacegrad \lr{ -\phi + c \BA + I \eta \lr{ -\phi_m + c \BF } } -\partial_0 \lr{ c \BA + I \eta c \BF } }{1,2} \\

&=

-\spacegrad \phi + c \spacegrad \wedge \BA - I \eta \spacegrad \phi_m + I \eta c \spacegrad \wedge \BF

-\partial_0 \lr{ c \BA + I \eta c \BF }.

\end{aligned}

\end{equation}

That is

\begin{equation}\label{eqn:gapotentials:1840}

\begin{aligned}

\BE &= -\spacegrad \phi + I \eta c \spacegrad \wedge \BF -c \partial_0 \BA \\

I \eta \BH &= c \spacegrad \wedge \BA - I \eta \spacegrad \phi_m - I \eta c \partial_0 \BF,

\end{aligned}

\end{equation}

or

\begin{equation}\label{eqn:gapotentials:1860}

\begin{aligned}

\BE &= - \spacegrad \phi -\partial_t \BA - \inv{\epsilon} \spacegrad \cross \BF \\

\BH &= - \spacegrad \phi_m - \partial_t \BF + \inv{\mu} \spacegrad \cross \BA.

\end{aligned}

\end{equation}

All is good. This is exactly the form that we expect.
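The magnetic field line can be checked explicitly, again assuming \( \eta = \sqrt{\mu/\epsilon} \), \( c = 1/\sqrt{\mu\epsilon} \), and \( c \partial_0 = \partial_t \). Using \( \spacegrad \wedge \BA = I \lr{ \spacegrad \cross \BA } \) and dividing through by \( I \eta \), we have

\begin{equation*}
\BH = \frac{c}{\eta} \spacegrad \cross \BA - \spacegrad \phi_m - \partial_t \BF,
\qquad
\frac{c}{\eta} = \inv{\sqrt{\mu\epsilon}} \sqrt{\frac{\epsilon}{\mu}} = \inv{\mu}.
\end{equation*}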

Let’s expand out Maxwell’s equation in terms of this potential representation and see what we get.

Let’s write the total field without the grade(1,2) selection, by subtracting off any grade(0,3) contributions

\begin{equation}\label{eqn:gapotentials:1880}

F = \lr{ \spacegrad - \partial_0 } A - \gpgrade{ \lr{ \spacegrad - \partial_0 } A }{0,3}.

\end{equation}

That difference term is

\begin{equation}\label{eqn:gapotentials:1900}

\begin{aligned}

- \gpgrade{ \lr{ \spacegrad - \partial_0 } A }{0,3}

&=

- \gpgrade{ \lr{ \spacegrad - \partial_0 } \lr{ -\phi + c \BA - I \eta \phi_m + I \eta c \BF } }{0,3} \\

&=

- c \spacegrad \cdot \BA - I \eta c \spacegrad \cdot \BF - \partial_0 \phi - I \eta \partial_0 \phi_m.

\end{aligned}

\end{equation}

The field is nicely split into a multivector term that depends directly on the full multivector potential \( A \), and a difference term that wipes out any scalar and pseudoscalar terms

\begin{equation}\label{eqn:gapotentials:1920}

F

=

\lr{ \spacegrad - \partial_0 } A

- \lr{ \partial_0 \phi + c \spacegrad \cdot \BA } - I \eta \lr{ \partial_0 \phi_m + c \spacegrad \cdot \BF }.

\end{equation}

Maxwell’s equations are now reduced to

\begin{equation}\label{eqn:gapotentials:1940}

\lr{ \spacegrad^2 - \partial_{00} } A

-

\lr{ \spacegrad + \partial_0 }

\lr{ \partial_0 \phi + c \spacegrad \cdot \BA }

-

\lr{ \spacegrad + \partial_0 }

I \eta \lr{ \partial_0 \phi_m + c \spacegrad \cdot \BF }

= J.

\end{equation}

This splits nicely into a single equation for each grade of \( A, J \) respectively. We write

\begin{equation}\label{eqn:gapotentials:1960}

J = \eta\lr{ c \rho - \BJ } + I \lr{ c \rho_m - \BM },

\end{equation}

so

\begin{equation}\label{eqn:gapotentials:1980}

\begin{aligned}

\lr{ \spacegrad^2 - \partial_{00} } (-\phi) - \partial_0 \lr{ \partial_0 \phi + c \spacegrad \cdot \BA } &= \eta c \rho \\

\lr{ \spacegrad^2 - \partial_{00} } (c \BA) - \spacegrad \lr{ \partial_0 \phi + c \spacegrad \cdot \BA } &= -\eta \BJ \\

\lr{ \spacegrad^2 - \partial_{00} } (I \eta c \BF) - I \eta \spacegrad \lr{ \partial_0 \phi_m + c \spacegrad \cdot \BF } &= -I \BM \\

\lr{ \spacegrad^2 - \partial_{00} } (-I \eta \phi_m) - I \eta \partial_0 \lr{ \partial_0 \phi_m + c \spacegrad \cdot \BF } &= I c \rho_m.

\end{aligned}

\end{equation}

If we choose the Lorentz gauge conditions

\begin{equation}\label{eqn:gapotentials:2000}

0 = \lr{ \partial_0 \phi + c \spacegrad \cdot \BA } = \lr{ \partial_0 \phi_m + c \spacegrad \cdot \BF },

\end{equation}

all of these equations decouple nicely, leaving us with 8 (scalar) equations in 8 unknowns

\begin{equation}\label{eqn:gapotentials:2020}

\begin{aligned}

\lr{ \spacegrad^2 - \partial_{00} } \phi &= -\frac{\rho}{\epsilon} \\

\lr{ \spacegrad^2 - \partial_{00} } \BA &= -\mu \BJ \\

\lr{ \spacegrad^2 - \partial_{00} } \BF &= -\epsilon \BM \\

\lr{ \spacegrad^2 - \partial_{00} } \phi_m &= - \frac{\rho_m}{\mu}.

\end{aligned}

\end{equation}
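The constants on the right hand sides follow from dividing each grade equation by the factor that multiplies its potential. This is a sketch that assumes \( \eta = \sqrt{\mu/\epsilon} \) and \( c = 1/\sqrt{\mu\epsilon} \), so that \( \eta c = 1/\epsilon \) and \( \eta/c = \mu \)

\begin{equation*}
\begin{aligned}
-\lr{ \spacegrad^2 - \partial_{00} } \phi &= \eta c \rho = \frac{\rho}{\epsilon} \\
c \lr{ \spacegrad^2 - \partial_{00} } \BA &= -\eta \BJ \quad \Rightarrow \quad \lr{ \spacegrad^2 - \partial_{00} } \BA = -\frac{\eta}{c} \BJ = -\mu \BJ \\
I \eta c \lr{ \spacegrad^2 - \partial_{00} } \BF &= -I \BM \quad \Rightarrow \quad \lr{ \spacegrad^2 - \partial_{00} } \BF = -\inv{\eta c} \BM = -\epsilon \BM \\
-I \eta \lr{ \spacegrad^2 - \partial_{00} } \phi_m &= I c \rho_m \quad \Rightarrow \quad \lr{ \spacegrad^2 - \partial_{00} } \phi_m = -\frac{c}{\eta} \rho_m = -\frac{\rho_m}{\mu}.
\end{aligned}
\end{equation*}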

Potentials in STA (space time algebra).

All of this was very convoluted. Maxwell’s equation in STA form is considerably simpler, as is the potential formulation.

STA form of Maxwell’s equation.

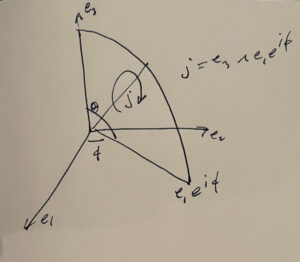

We identify

\begin{equation}\label{eqn:gapotentials:2040}

\begin{aligned}

\Be_k &= \gamma_k \gamma_0 \\

I &= \Be_1 \Be_2 \Be_3 = \gamma_0 \gamma_1 \gamma_2 \gamma_3 \\

\gamma^\mu \cdot \gamma_\nu &= {\delta^\mu}_\nu.

\end{aligned}

\end{equation}

Our field multivector

\begin{equation}\label{eqn:gapotentials:2060}

\begin{aligned}

F

&= \BE + I \eta \BH \\

&= \gamma_{k0} E^k + \eta \gamma_{0123k0} H^k \\

&= \gamma_{k0} E^k + \eta \gamma_{123k} H^k,

\end{aligned}

\end{equation}

now has a pure bivector representation in STA (since \( k \) will always clobber one of the \( 1,2,3 \) indexes.) To find the STA representation of Maxwell’s equation, we simply multiply both sides of our multivector representation, from the left, by \( \gamma_0 \).

\begin{equation}\label{eqn:gapotentials:2080}

\gamma_0 \lr{ \spacegrad + \partial_0 } F = \gamma_0 \lr{ \eta \lr{ c \rho - \BJ } + I \lr{ c \rho_m - \BM } }.

\end{equation}

The LHS is just the spacetime gradient of \( F \), which we can see by expanding the product

\begin{equation}\label{eqn:gapotentials:2100}

\begin{aligned}

\gamma_0 \lr{ \spacegrad + \partial_0 }

&=

\gamma_0 \lr{ \gamma_{k0} \PD{x^k}{} + \PD{x^0}{} } \\

&=

-\gamma_{k} \PD{x^k}{} + \gamma_0 \PD{x^0}{}.

\end{aligned}

\end{equation}

This is the spacetime gradient

\begin{equation}\label{eqn:gapotentials:2120}

\grad \equiv \gamma^k \PD{x^k}{} + \gamma^0 \PD{x^0}{} = \gamma^\mu \partial_\mu.

\end{equation}
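The identification of \ref{eqn:gapotentials:2100} with the spacetime gradient relies on the reciprocal frame. Here is a sketch, assuming the metric signature \( (+,-,-,-) \), that is \( \gamma_0^2 = 1 \) and \( \gamma_k^2 = -1 \), which is consistent with the spatial basis vectors squaring to unity

\begin{equation*}
\begin{aligned}
\gamma^0 &= \gamma_0 \\
\gamma^k &= -\gamma_k \\
\Be_k^2 &= \gamma_k \gamma_0 \gamma_k \gamma_0 = -\gamma_k^2 \gamma_0^2 = 1.
\end{aligned}
\end{equation*}

With these, \( \gamma^\mu \cdot \gamma_\nu = {\delta^\mu}_\nu \) holds, and \( -\gamma_{k} \PD{x^k}{} + \gamma_0 \PD{x^0}{} = \gamma^\mu \partial_\mu \).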

Our RHS is

\begin{equation}\label{eqn:gapotentials:2140}

\begin{aligned}

\gamma_0 \lr{ \eta \lr{ c \rho - \BJ } + I \lr{ c \rho_m - \BM } }

&=

\gamma_0 \frac{\rho}{\epsilon} - \gamma_{0k0} \eta (\BJ \cdot \Be_k)

- I \lr{ c \rho_m \gamma_0 - \gamma_{0k0} (\BM \cdot \Be_k) } \\

&=

\gamma_0 \frac{\rho}{\epsilon} + \gamma_k \eta (\BJ \cdot \Be_k)

- I \lr{ c \rho_m \gamma_0 + \gamma_{k} (\BM \cdot \Be_k) }.

\end{aligned}

\end{equation}

If we let

\begin{equation}\label{eqn:gapotentials:2160}

\begin{aligned}

J_e^0 &= \frac{\rho}{\epsilon} \\

J_e^k &= \eta (\BJ \cdot \Be_k) \\

J_m^0 &= c \rho_m \\

J_m^k &= (\BM \cdot \Be_k) \\

J_e &= J_e^\mu \gamma_\mu \\

J_m &= J_m^\mu \gamma_\mu,

\end{aligned}

\end{equation}

then we are left with

\begin{equation}\label{eqn:gapotentials:2180}

\grad F = J_e – I J_m,

\end{equation}

or just

\begin{equation}\label{eqn:gapotentials:2640}

\grad F = J,

\end{equation}

where we now give a different meaning to \( J \) than we had in the multivector formulation. This \( J \) is now a multivector with grade(1,3) components.

Case I: potential formulation for conventional sources.

Much like we did when finding the potential formulation for the multivector form of Maxwell’s equation, we use superposition, and tackle the conventional sources and the fictitious magnetic sources separately.

With no fictitious sources, Maxwell’s equation is

\begin{equation}\label{eqn:gapotentials:2200}

\grad F = J_e,

\end{equation}

which we may split into vector and trivector components

\begin{equation}\label{eqn:gapotentials:2220}

\begin{aligned}

\grad \cdot F &= J_e \\

\grad \wedge F &= 0.

\end{aligned}

\end{equation}

Clearly, the trivector equation can be satisfied by setting

\begin{equation}\label{eqn:gapotentials:2240}

F = \grad \wedge A,

\end{equation}

for some vector \( A \). We may also make gauge transformations of \( A \) of the form

\begin{equation}\label{eqn:gapotentials:2260}

A \rightarrow A + \grad \psi,

\end{equation}

without changing \( F \), showing that \( A \) is not uniquely determined. With such a representation, Maxwell’s equation is now reduced to

\begin{equation}\label{eqn:gapotentials:2280}

\grad \cdot F = J_e,

\end{equation}

or

\begin{equation}\label{eqn:gapotentials:2300}

\begin{aligned}

J_e

&=

\grad \cdot \lr{ \grad \wedge A } \\

&=

\grad^2 A - \grad \lr{ \grad \cdot A }.

\end{aligned}

\end{equation}
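The second line follows from a short expansion. This is a sketch that uses \( \grad A = \grad \cdot A + \grad \wedge A \) for vector \( A \), the split of a vector-bivector product into dot and wedge parts, and \( \grad \wedge \lr{ \grad \wedge A } = 0 \)

\begin{equation*}
\begin{aligned}
\grad \cdot \lr{ \grad \wedge A }
&= \grad \lr{ \grad \wedge A } - \grad \wedge \lr{ \grad \wedge A } \\
&= \grad \lr{ \grad A - \grad \cdot A } \\
&= \grad^2 A - \grad \lr{ \grad \cdot A }.
\end{aligned}
\end{equation*}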

Clearly the equivalent of the Lorentz gauge condition is now just

\begin{equation}\label{eqn:gapotentials:2320}

\grad \cdot A = 0,

\end{equation}

so the Lorentz gauge potential form of Maxwell’s equation is just

\begin{equation}\label{eqn:gapotentials:n}

\grad^2 A = J_e.

\end{equation}

Case II: potential formulation for fictitious sources.

If we have only fictitious sources, Maxwell’s equation is

\begin{equation}\label{eqn:gapotentials:2340}

\grad F = -I J_m,

\end{equation}

or after left multiplication by \( I \), which anticommutes with the vector \( \grad \) and squares to \( -1 \) in STA, we have

\begin{equation}\label{eqn:gapotentials:2360}

\grad I F = -J_m.

\end{equation}

Let \( G = I F \), for the dual field, which is still a bivector. As before, we can split Maxwell’s equations into vector and trivector components

\begin{equation}\label{eqn:gapotentials:2380}

\begin{aligned}

\grad \cdot G &= -J_m \\

\grad \wedge G &= 0.

\end{aligned}

\end{equation}

We may set

\begin{equation}\label{eqn:gapotentials:2400}

G = \grad \wedge K,

\end{equation}

for vector \( K \). Maxwell’s equation is now reduced to

\begin{equation}\label{eqn:gapotentials:2420}

\grad \cdot G = -J_m,

\end{equation}

or

\begin{equation}\label{eqn:gapotentials:2440}

\begin{aligned}

-J_m

&=

\grad \cdot \lr{ \grad \wedge K } \\

&=

\grad^2 K - \grad \lr{ \grad \cdot K }.

\end{aligned}

\end{equation}

As before we may make gauge transformations by adding a gradient to our potential

\begin{equation}\label{eqn:gapotentials:2460}

K \rightarrow K + \grad \bar{\psi},

\end{equation}

which will not change \( G \). For such sources, the Lorentz gauge condition is \( \grad \cdot K = 0 \). With the Lorentz gauge, Maxwell’s equation is reduced to

\begin{equation}\label{eqn:gapotentials:2480}

\grad^2 K = -J_m.

\end{equation}

Superposition.

For non-fictitious sources, we have

\begin{equation}\label{eqn:gapotentials:2500}

F = \grad \wedge A

\end{equation}

and for fictitious sources, we have

\begin{equation}\label{eqn:gapotentials:2520}

I F = G = \grad \wedge K,

\end{equation}

or

\begin{equation}\label{eqn:gapotentials:2540}

F = -I G = -I \lr{ \grad \wedge K }.

\end{equation}

Combining these results, we have

\begin{equation}\label{eqn:gapotentials:2560}

\begin{aligned}

F

&= \grad \wedge A -I \lr{ \grad \wedge K } \\

&= \gpgradetwo{ \grad \wedge A -I \lr{ \grad \wedge K } } \\

&= \gpgradetwo{ \grad A -I \lr{ \grad K } } \\

&= \gpgradetwo{ \grad \lr{ A + I K } },

\end{aligned}

\end{equation}

or

\begin{equation}\label{eqn:gapotentials:2580}

F = \grad \lr{ A + I K } - \gpgrade{ \grad \lr{ A + I K } }{0,4}.

\end{equation}

Maxwell’s equation is

\begin{equation}\label{eqn:gapotentials:2600}

\grad^2 \lr{ A + I K } - \grad \gpgrade{ \grad \lr{ A + I K } }{0,4} = J.

\end{equation}

With the Lorentz gauge, this splits nicely into one forced wave equation for each vector potential

\begin{equation}\label{eqn:gapotentials:2620}

\begin{aligned}

\grad^2 A &= J_e \\

\grad^2 K &= -J_m.

\end{aligned}

\end{equation}
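As a consistency check on the signs, here is a sketch that uses the facts that \( I \) anticommutes with vectors in STA, while the scalar operator \( \grad^2 \) commutes with \( I \). In the Lorentz gauge we have

\begin{equation*}
\grad F
= \grad^2 \lr{ A + I K }
= \grad^2 A + I \grad^2 K
= J_e - I J_m,
\end{equation*}

and matching the vector and trivector parts gives the two wave equations above.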

References

[1] Constantine A Balanis. Antenna theory: analysis and design. John Wiley & Sons, 3rd edition, 2005.

[2] R.P. Feynman, R.B. Leighton, and M.L. Sands. Feynman lectures on physics, Volume II, chapter The Maxwell Equations. Addison-Wesley Publishing Company, Reading, Massachusetts, 1963. URL https://www.feynmanlectures.caltech.edu/II_18.html.

[3] David Jeffrey Griffiths. Introduction to electrodynamics. Prentice Hall, Upper Saddle River, NJ, 3rd edition, 1999.

[4] JD Jackson. Classical Electrodynamics. John Wiley and Sons, 2nd edition, 1975.